The Missing Commit Layer in AI Governance: Why “Safety” Isn’t Enough

Action Governance is the discipline.

The Commit Layer is where it lives.

Formation governs what systems see and say. Forensics explains what happened.

The missing layer decides what is allowed to execute at all.

The one line you’ll remember

If your governance cannot refuse an action before it executes, then it is not yet a full execution control.

The Governance Failure Hiding in Plain Sight

AI governance has become fluent in two things:

- Formation controls: what the system is allowed to see and say (data controls, approved model endpoints, guardrails, safety filters, policy docs).

- Forensics: what the system already did (logs, dashboards, traces, incident reports, after-action reviews).

Both are necessary. Neither answers the question that matters when AI touches the real world:

“Who was allowed to let this action happen?”

That question isn’t academic. It’s underwriting. It’s regulatory. It’s board-level accountability.

Because the highest-cost failures aren’t “bad outputs.” They’re irreversible commits:

- filing under the wrong authority

- sending protected data externally

- approving a binding decision without supervision

- moving money

- deleting or changing critical records

Once those happen, you can’t “un-send” them. You can only explain them.

The Gap: We Govern Intelligence, Not Execution

Most stacks treat AI risk like an information problem:

- “Did the model see something it shouldn’t?”

- “Did it say something it shouldn’t?”

- “Can we observe and reconstruct what happened?”

But modern AI isn’t just generating text. It’s increasingly:

- calling tools

- triggering workflows

- reaching external destinations

- executing actions under human or system authority

When AI becomes action-capable, the risk stops being informational and becomes authority-bound:

A valid identity, a capable agent, and an available execution path can still produce an irreversible mistake. Guardrails shape behavior, IAM governs access, and GRC defines policy, but none of them by themselves decides whether this action may execute under this authority, in this context, right now.

IAM/RBAC answers: “Can this identity access the tool?”

The Commit Layer answers: “May this action bind the institution right now — in this context, under this authority?”

Access is permission to enter. The Commit Layer is authority to commit. A valid login can still produce an unauthorized filing/payment/change — unless a gate can refuse before irreversibility.

This is the missing layer.
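To make the access-versus-commit distinction concrete, here is a minimal sketch. Everything in it is hypothetical: the role names, the `TOOL_ROLES` and `COMMIT_LIMITS` tables, and the idea of expressing authority as a per-role amount limit are illustrative assumptions, not a description of any particular IAM product or policy engine.

```python
from typing import Dict, Set

# Hypothetical access table: which roles may open which tool.
TOOL_ROLES: Dict[str, Set[str]] = {"payments": {"finance_agent", "controller"}}

# Hypothetical authority table: per-role commit limit in whole currency units.
COMMIT_LIMITS: Dict[str, int] = {"finance_agent": 1_000, "controller": 50_000}


def can_access(role: str, tool: str) -> bool:
    """IAM/RBAC-style question: may this identity reach the tool at all?"""
    return role in TOOL_ROLES.get(tool, set())


def may_commit(role: str, tool: str, amount: int) -> bool:
    """Commit-style question: may this specific action bind the institution?"""
    if not can_access(role, tool):
        return False
    limit = COMMIT_LIMITS.get(role)
    if limit is None:
        return False  # no stated authority for this role: fail closed
    return amount <= limit
```

In this sketch a `finance_agent` passes `can_access` for the payments tool in every case, yet `may_commit` refuses a 25,000 payment because it exceeds that role's stated authority. A valid login is necessary, but it is not the same thing as permission to commit.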

Introducing the Commit Layer

The Commit Layer is the missing control point in AI governance: the execution-boundary checkpoint that can answer, before an action runs:

“Is this specific actor allowed to take this specific action, in this context, under this authority, right now?”

It is not “another dashboard.”

It is not “better logging.”

It is not “more policies.”

It is a structural gate placed immediately upstream of irreversible actions—the point where the system can still refuse.

Workflows route work. The Commit Layer governs execution. If an irreversible step can execute without a prior verdict, you don’t have a Commit Layer; you have advisory guidance.

The Commit Layer has only three legitimate outcomes

For governed actions, a real Commit Layer returns one of three verdicts:

- ✅ Approve — action may execute

- ❌ Refuse — action is blocked (fail-closed)

- 🟧 Supervised Override — action may proceed only with named human accountability

Anything else (“warn and proceed,” “best effort,” “we’ll log it”) is not action governance. It’s hope with telemetry.
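One way to keep “warn and proceed” out of the system is to make the verdict a closed type with exactly three members. The sketch below assumes a hypothetical `decide` function that receives an already-computed policy result; the names `Verdict`, `Decision`, and `decide` are illustrative, and how policy is actually evaluated is out of scope here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    SUPERVISED_OVERRIDE = "supervised_override"


@dataclass(frozen=True)
class Decision:
    verdict: Verdict
    reason: str
    accountable_human: Optional[str] = None  # set only for overrides


def decide(policy_allows: Optional[bool], override_by: Optional[str] = None) -> Decision:
    """Map a policy result onto exactly one of the three verdicts.

    policy_allows is True (allowed), False (denied), or None (policy could
    not be evaluated). There is deliberately no "warn and proceed" branch.
    """
    if policy_allows is True:
        return Decision(Verdict.APPROVE, "policy allows action")
    if override_by:
        # A named human accepts accountability for proceeding.
        return Decision(Verdict.SUPERVISED_OVERRIDE, "named override", override_by)
    # Denied or undecidable, with nobody accountable: fail closed.
    return Decision(Verdict.REFUSE, "not authorized or policy undecidable")
```

Note the design choice in this sketch: an undecidable policy result (`None`) is treated the same as a denial, and an empty override name does not count as accountability.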

Why “Safety” Isn’t Enough

Model safety and guardrails are about language and behavior:

- preventing disallowed content

- reducing hallucinations

- filtering dangerous prompts

- constraining what the model is allowed to say

But safe text can still produce unsafe actions.

A model can generate a perfectly polite, compliant-looking message… and still:

- send it to the wrong recipient,

- file it in the wrong venue,

- sign it under the wrong authority,

- commit it to the wrong system.

The underwriting problem is not “did the model sound safe?”

It’s:

Did the system have authority to do what it just did—and can you prove it?

That is commit-layer territory.

Where the Commit Layer sits

Think of AI governance as two stacks plus one missing layer:

Formation Stack (what the system may know and say)

- DLP / data governance

- approved AI endpoints / LLM gateways

- model monitoring / evaluation

- output safety guardrails

Commit Layer (what the system may execute)

- pre-execution authority gate

- approve/refuse/override

- fail-closed semantics

Forensics Stack (what already happened)

- logs / dashboards / traces

- incident response / audits

- reporting and reconstruction

Formation governs inputs and outputs. Forensics governs explanations. The Commit Layer governs reality.

What The Gate Evaluates at Decision Time

The Commit Layer doesn’t need your prompts. It doesn’t need your chain-of-thought.

It needs the minimum context that any enterprise already has:

- Who is acting?

- Where are they acting?

- What are they trying to do?

- How fast is it intended to move?

- Under whose authority / consent?

If any anchor is missing or ambiguous, a real commit layer fails closed.

Fail-closed isn’t harsh. It’s the definition of authority.

Fail-closed keeps the institution inside mandate. Supervised Override preserves speed with accountability — through the same pre-execution authority gate.

Speed doesn’t require bypassing control — it requires an accountable escalation path.
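The five anchors and the fail-closed rule can be sketched as a simple completeness check that runs before any policy evaluation. The field names below are assumptions chosen to mirror the questions above. The sketch only detects missing or blank anchors; deciding what counts as “ambiguous” is policy-specific and is not attempted here.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class ActionRequest:
    """The five anchors from the list above; field names are illustrative."""
    actor: Optional[str] = None      # who is acting
    venue: Optional[str] = None      # where they are acting
    action: Optional[str] = None     # what they are trying to do
    urgency: Optional[str] = None    # how fast it is intended to move
    authority: Optional[str] = None  # under whose authority / consent


def missing_anchors(request: ActionRequest) -> List[str]:
    """Return the names of anchors that are absent or blank."""
    missing = []
    for f in fields(request):
        value = getattr(request, f.name)
        if value is None or not str(value).strip():
            missing.append(f.name)
    return missing


def may_evaluate(request: ActionRequest) -> bool:
    """Fail closed: policy evaluation starts only when every anchor is present."""
    return not missing_anchors(request)
```

A request with an actor and an action but no venue, urgency, or authority never reaches policy evaluation in this sketch. The only available outcomes are refusal or a supervised override that supplies the missing accountability.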

What This Kind of Runtime Must Actually Do

Once you name the Commit Layer, the next question becomes:

“What kind of system implements it?”

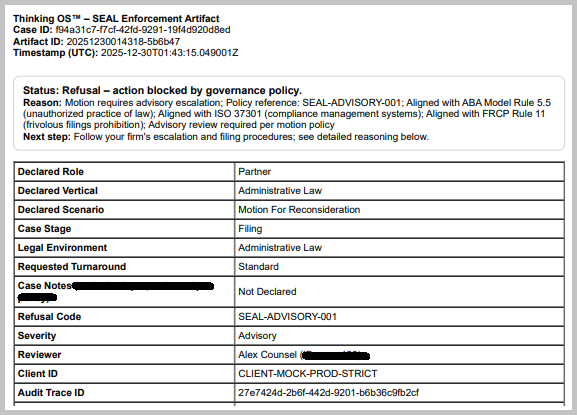

In practice, this requires a runtime enforcement layer that can deterministically permit, refuse, or escalate a governed action at the execution boundary, and produce a verifiable decision artifact for each outcome.

Concretely, that means a sealed, execution-time governance runtime that:

- is operator-agnostic (humans, agents, systems hit the same gate),

- can refuse before execution,

- supports supervised override with named accountability,

- emits sealed decision artifacts for every governed verdict,

- is non-bypassable when wired (and explicitly out of scope when not).

Truth test: if, within a governed workflow, the same irreversible action can execute without a prior verdict, the “pre-execution authority gate” is advisory — not the commit boundary.

A real Commit Layer is testable at the boundary where irreversible execution begins (approve / refuse / supervised override, with receipts).
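The “no execution without a prior verdict” property can be sketched as a wrapper that is the only route to an irreversible function. This is a toy, in-process illustration: `governed`, `Refused`, `demo_gate`, and `send_payment` are hypothetical names, and a Python decorator alone is not truly non-bypassable. In a real deployment the boundary would presumably be enforced outside the caller's process, for example by letting only the gate hold the credentials needed to execute.

```python
from typing import Callable, List


class Refused(Exception):
    """Raised when the gate does not return an approval."""


def governed(gate: Callable[[str, dict], bool], receipts: List[dict]):
    """Wrap an irreversible function so it runs only after the gate approves."""
    def decorator(fn):
        def wrapper(**context):
            try:
                approved = gate(fn.__name__, context) is True
            except Exception:
                approved = False  # a failing gate fails closed
            # Record the verdict before anything executes.
            receipts.append({"action": fn.__name__, "approved": approved, "context": context})
            if not approved:
                raise Refused(fn.__name__)
            return fn(**context)
        wrapper.__name__ = fn.__name__
        return wrapper
    return decorator


receipts: List[dict] = []


def demo_gate(action: str, context: dict) -> bool:
    # Hypothetical rule: every action needs an explicit authority.
    return bool(context.get("authority"))


@governed(demo_gate, receipts)
def send_payment(amount, authority=None):
    return f"sent {amount}"
```

Calling `send_payment(amount=100, authority="cfo")` executes and leaves an approval receipt. Calling `send_payment(amount=100)` raises `Refused` before the function body runs and leaves a refusal receipt. That pair of behaviors is what a boundary test would exercise.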

The Missing Evidence Surface: “Bad Actions That Never Happened”

Here’s the part most people miss:

Without a Commit Layer, your organization has no structured proof of prevention.

You only have post-hoc narratives.

Logs reconstruct. Sealed decision artifacts prove. A log is disputable; a sealed receipt is integrity-checkable — including outside the vendor’s system.

With a Commit Layer implemented as a runtime enforcement layer, you generate a new class of evidence:

- every refused high-risk intent (a prevented loss event),

- every supervised override (accepted risk with accountability),

- every approval (authorized execution under policy).

This produces a dataset insurers and regulators can reason about:

The most valuable risk evidence is the catastrophe that almost happened—captured before harm, with a receipt.

This is the kind of evidence surface that makes a prevented-loss ledger possible.

No gate → no ledger.

No ledger → no proof of prevention.
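As a rough illustration of what such a ledger could look like, the sketch below tallies decision records by verdict. The record shape (a dict with a `verdict` key) and the summary labels are assumptions made for this example only.

```python
from collections import Counter
from typing import Dict, Iterable


def summarize_ledger(artifacts: Iterable[dict]) -> Dict[str, int]:
    """Count governed verdicts into the three evidence classes named above."""
    counts = Counter(a["verdict"] for a in artifacts)
    return {
        "prevented": counts.get("refuse", 0),
        "accepted_with_accountability": counts.get("supervised_override", 0),
        "authorized": counts.get("approve", 0),
    }
```

The useful number for an underwriter in this sketch is `prevented`: it only exists because a gate recorded refusals at decision time.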

Sealed Artifact, Not a Screenshot

A governed runtime should not leave you with screenshots or narratives. It should leave you with a sealed decision artifact: a tenant-owned record of who acted, what was attempted, which policy context applied, what decision was returned, and when. Public examples can be redacted or synthetic; what matters is that the evidence surface is structured, integrity-verifiable, and reviewable outside the vendor’s storytelling.
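A minimal sketch of what “sealed” could mean in practice, assuming an HMAC-SHA256 tag computed over a canonical JSON encoding of the record with a tenant-held key. The `seal` and `verify` helpers and the record fields are hypothetical. HMAC is a symmetric scheme chosen here only because it is in the Python standard library; verification by a third party who should not hold the key would call for an asymmetric signature instead.

```python
import hashlib
import hmac
import json


def _canonical(record: dict) -> bytes:
    """Stable byte encoding so the same record always produces the same seal."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def seal(record: dict, key: bytes) -> dict:
    """Return the record plus an HMAC-SHA256 seal over its canonical JSON."""
    tag = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "seal": tag}


def verify(artifact: dict, key: bytes) -> bool:
    """Recompute the seal and compare in constant time."""
    expected = hmac.new(key, _canonical(artifact["record"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, artifact["seal"])
```

With a synthetic record such as `{"actor": "agent-7", "action": "wire_transfer", "policy": "payments-v3", "verdict": "refuse", "at": "2025-01-01T00:00:00Z"}`, changing any field after sealing (for example flipping the verdict to approve) makes `verify` return `False`. That is the property a screenshot or an editable log line cannot offer.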

What Boards, Insurers, and Regulators Should Ask (the truth test)

If someone claims they “do AI governance,” ask one question:

Where is your Commit Layer?

Show the exact point where an irreversible action can be refused before it executes.

Then ask the follow-ons:

- What happens on ambiguity? Refuse, override, or “warn and proceed”?

- What is your evidence surface? Sealed artifacts you can produce, or logs you’ll argue about later?

- What is your coverage map? Which high-risk workflows are wired through the gate, and which aren’t?

- Who owns authority? Is it derived from client identity/policy systems, or reinvented inside a vendor tool?

If your last line of defense is post-hoc logging, then your control posture still depends too heavily on reconstruction after the fact.

The Takeaway

AI governance cannot stop at formation and forensics.

When AI can act, governance must include the missing layer that decides what can execute.

Safety helps reduce bad outputs. A pre-execution authority gate helps prevent unauthorized execution. Logs help explain harm after the fact. A governed runtime with sealed artifacts helps prove what was prevented and what was allowed. That is the missing execution-time layer many organizations still do not have.

That’s the category shift.