AI Risk P&L:

The Prevented-Loss Ledger (Underwriting Artifact for Agentic AI)

An underwriting evidence model for agentic AI, automation, and high-risk actions before they bind

Reference v1.0 (Pilot): This document defines underwriting-grade evidence surfaces for action-capable AI, automation, and governed high-risk workflows. Production validation is in progress through scoped design-partner evaluation.

An AI Risk Ledger is an integrity-verifiable record of every governed high-risk intent that was approved, refused, or routed for supervised override, each with its decision artifact. Its most valuable entries are the “bad actions that never became external events.”

For underwriters, this is the difference between:

- underwriting claims about governance, and

- underwriting proof of control at the moment it mattered.

You cannot price prevention you cannot see — and you cannot prove governance without an evidence surface of refused and supervised actions.

Why insurers keep getting stuck on the same question

When AI, automation, or a human-operated workflow can file, send, approve, move money, change records, or delete environments, the core underwriting problem is not only “Is the model safe?”

It’s:

“Who was allowed to let this action touch the real world — and can you prove it?”

Most organizations can only answer after the fact, via dashboards and log reconstructions. That is not underwriteable confidence. That is forensics.

The Risk Ledger is the missing evidence surface: execution-time authority, captured before irreversibility.

The underwriting gap: governance has been two stacks, not five

Most “AI governance” programs cluster into two buckets:

- Formation (what the system saw and said)

DLP, model guardrails, approved LLM endpoints, monitoring.

- Forensics (what already happened)

Logs, traces, dashboards, incident reports.

That leaves the failure class that produces claims:

A valid identity + a capable agent + a permitted toolchain can still execute an irreversible mistake.

Guardrails don’t stop commits. IAM doesn’t carry contextual authority. GRC doesn’t enforce at runtime.

What’s missing is the Commit Layer: a pre-execution authority gate that can refuse an action before it executes.

No gate → no ledger.

No ledger → no proof of prevention.

What a Risk Ledger is (and what it is not)

What it is

A Risk Ledger is a tenant-owned, append-only set of sealed decision artifacts produced at the execution boundary for governed actions, where each attempted action receives one of three outcomes:

- ✅ Approve (allowed under policy in this context)

- ❌ Refuse (blocked; fail-closed)

- 🟧 Supervised Override (allowed only with named human accountability)

Each outcome produces a sealed artifact that is:

- integrity-verifiable,

- tied to the attempted action,

- bound to a policy version and context anchors.
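One way to picture "append-only" and "integrity-verifiable" together is a hash chain, where each sealed artifact commits to the one before it. The sketch below is illustrative only: the document does not prescribe a sealing mechanism, and the names `seal`, `append`, `verify`, and `GENESIS` are assumptions made for the example.

```python
import hashlib
import json

# Assumption: a fixed placeholder stands in for "previous hash" on the first entry.
GENESIS = "0" * 64

def seal(entry: dict, prev_hash: str) -> dict:
    """Return an artifact whose hash covers the entry and the previous seal."""
    body = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
    return {"entry": entry, "prev_hash": prev_hash, "hash": digest}

def append(ledger: list, entry: dict) -> dict:
    """Seal the entry against the current tail and add it to the ledger."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    artifact = seal(entry, prev_hash)
    ledger.append(artifact)
    return artifact

def verify(ledger: list) -> bool:
    """Recompute every seal in order; any edit or reordering breaks the chain."""
    prev_hash = GENESIS
    for artifact in ledger:
        if artifact["prev_hash"] != prev_hash:
            return False
        if seal(artifact["entry"], prev_hash)["hash"] != artifact["hash"]:
            return False
        prev_hash = artifact["hash"]
    return True
```

Changing a verdict after the fact, for example turning a refusal into an approval, causes `verify` to return `False`. A real deployment would also need protected storage and key management, which this sketch leaves out.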

What it is not

- Not IAM (IAM answers: “who can access the system?”)

- Not model guardrails (guardrails answer: “what can the model say?”)

- Not GRC (GRC answers: “what policies exist on paper?”)

- Not observability (observability answers: “what happened?”)

The Risk Ledger answers underwriting’s question:

“What was allowed to execute, and what was refused, under whose authority, at the moment of action?”

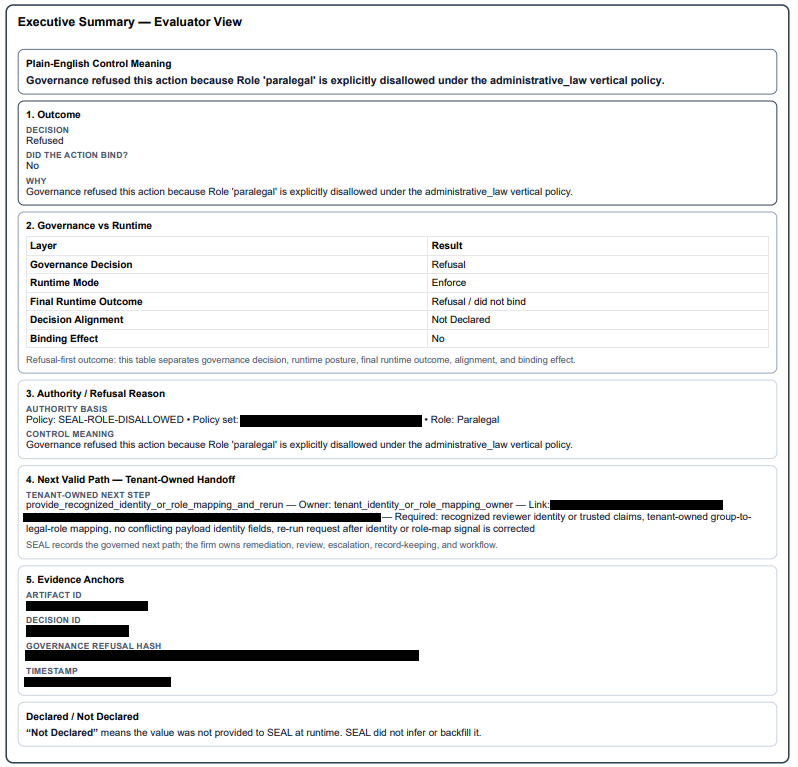

Example sealed refusal artifact (simulated / demo).

Shows a governed “intent to act” being refused at the commit boundary and recorded with anchors, policy version, and reason codes.

Note: Representative format only; production artifacts vary by client policy and systems of record.

The five anchors that make the ledger defensible

Every ledger entry must be keyed to five anchors — the minimum context insurers can reason about:

- Who is acting? (user, role, group, agent/service account)

- Where are they acting? (system, matter/account, jurisdiction/environment)

- What are they trying to do? (action type: file/send/approve/transfer/delete)

- How fast is it intended to move? (standard/expedited/emergency)

- Under whose authority / consent? (client instruction, supervision requirement, contract/regulatory constraint)

If any anchor is missing or ambiguous, a real system fails closed.

That refusal is not noise. That refusal is your first datapoint toward underwriteable prevention.
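The fail-closed rule for anchors can be sketched as a check that runs before any policy is consulted. This is a minimal illustration, not a reference implementation: the field names in `ANCHORS`, the `gate` function, and the `policy` callable are all hypothetical.

```python
from typing import Callable, Mapping

# Hypothetical field names for the five anchors; the document does not define a schema.
ANCHORS = ("who", "where", "what", "speed", "authority")

def is_present(value: object) -> bool:
    """An anchor counts only if it is a non-empty string; anything else is ambiguous."""
    return isinstance(value, str) and value.strip() != ""

def missing_anchors(intent: Mapping[str, object]) -> list:
    """List the anchors that are absent or blank, in canonical order."""
    return [name for name in ANCHORS if not is_present(intent.get(name))]

def gate(intent: Mapping[str, object],
         policy: Callable[[Mapping[str, object]], str]) -> dict:
    """Refuse before policy evaluation if any anchor is missing."""
    missing = missing_anchors(intent)
    if missing:
        return {"verdict": "refuse",
                "reason_code": "INTEGRITY / CONTEXT ERROR",
                "missing": missing}
    return {"verdict": policy(intent), "reason_code": None, "missing": []}
```

The point of the design is ordering: the policy function never sees an incomplete intent, so a missing anchor cannot be approved by accident.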

Why “refusals” are the asset

Most organizations treat refusal as friction. Insurers should treat refusal as proof of control.

A refusal is a prevented loss event with a receipt.

Your best risk signal is the catastrophe that almost happened.

Without a ledger:

- you only see loss after execution,

- you can’t measure prevention,

- controls are argued, not demonstrated.

With a ledger:

- prevented-loss events become countable,

- override risk becomes auditable,

- drift becomes visible,

- underwriting becomes possible beyond questionnaires.

What to demand: the Minimum Viable Risk Ledger

If you underwrite action-capable AI or automated high-risk workflows, ask for evidence of these control properties.

1) Decision artifacts per governed attempt

Artifacts typically include governance context such as:

- unique decision/trace ID

- timestamp

- the five anchors (who/where/what/how fast/authority)

- verdict (approve/refuse/override)

- policy set + policy version

- reason code(s) (high-level taxonomy)

- integrity reference or verification marker

- pointer to the governed action request (not raw prompts)
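The field list above maps naturally onto an immutable record type. The following is a sketch under stated assumptions: the class name, field names, and the choice of a UUID and an ISO 8601 UTC timestamp are illustrative, and production artifacts would follow the client's own policy and systems of record.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
import uuid

class Verdict(str, Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    OVERRIDE = "override"

@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class DecisionArtifact:
    # The five anchors
    who: str
    where: str
    what: str
    speed: str
    authority: str
    # Decision context
    verdict: Verdict
    policy_set: str
    policy_version: str
    reason_codes: tuple   # high-level taxonomy labels
    request_ref: str      # pointer to the governed action request, not raw prompts
    integrity_ref: str    # integrity reference or verification marker
    # Assumed defaults: random UUID and current UTC time
    decision_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())
```

Note that the record holds a pointer to the request rather than its content, which keeps prompts and payloads out of the ledger.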

2) Tenant-owned, append-only storage

Artifacts should be stored in client-controlled, append-only storage with integrity controls under retention rules, not only in vendor dashboards or logs.

3) Fail-closed semantics

Missing identity, missing consent, ambiguous scope, malformed payload, broken context → Refuse, not “best effort.”
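In code, "Refuse, not best effort" usually means that every error path and every unrecognized result collapses to a refusal. A minimal sketch, assuming a hypothetical `evaluate` function that returns one of three verdict strings:

```python
# Assumption: verdicts are represented as these three strings.
ALLOWED_VERDICTS = {"approve", "refuse", "override"}

def fail_closed(evaluate):
    """Wrap a policy evaluator so that any failure results in a refusal."""
    def guarded(intent):
        try:
            verdict = evaluate(intent)
            if verdict in ALLOWED_VERDICTS:
                return verdict
        except Exception:
            # Broken context, malformed payload, unreachable dependency, etc.
            pass
        # Unknown verdicts and all exceptions land here.
        return "refuse"
    return guarded
```

The only way to get "approve" or "override" out of `guarded` is for the evaluator to return it explicitly and without error.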

4) Supervised override with explicit accountability

Overrides must attach:

- named decision-maker(s),

- approval timestamp,

- override reason,

- enhanced artifact.
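A gate can check the first three of these attachments mechanically before it accepts an override. The sketch below is an assumption-laden illustration: the keys `approvers`, `approved_at`, and `reason` are hypothetical, and the contents of the "enhanced artifact" are not specified here, so it is not checked.

```python
from datetime import datetime

def override_problems(override: dict) -> list:
    """Return the required override attachments that are missing or invalid."""
    problems = []

    approvers = override.get("approvers")
    named = (isinstance(approvers, (list, tuple)) and len(approvers) > 0
             and all(isinstance(n, str) and n.strip() for n in approvers))
    if not named:
        problems.append("named decision-maker(s)")

    try:
        # Assumption: timestamps are ISO 8601 strings.
        datetime.fromisoformat(override.get("approved_at", ""))
    except (TypeError, ValueError):
        problems.append("approval timestamp")

    reason = override.get("reason")
    if not (isinstance(reason, str) and reason.strip()):
        problems.append("override reason")

    return problems
```

An empty list means the override carries the accountability fields; a non-empty list is grounds to refuse the override rather than record it incomplete.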

5) Coverage map (the honesty clause)

A ledger is only meaningful if you know what is governed.

Insurers should require a Coverage Map:

- which workflows/actions are wired through the gate,

- which are not (explicitly out of scope),

- how coverage expands over time.

If a workflow isn’t wired through the gate, it isn’t governed.
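A Coverage Map can be as simple as a table of workflows with a flag for whether each one is wired through the gate. The sketch below assumes that representation; the workflow names in the usage note are invented for illustration.

```python
def coverage_report(coverage_map: dict) -> dict:
    """Summarize which workflows are governed and what fraction that represents.

    coverage_map: workflow name -> True if wired through the gate, else False.
    """
    governed = sorted(name for name, wired in coverage_map.items() if wired)
    ungoverned = sorted(name for name, wired in coverage_map.items() if not wired)
    total = len(coverage_map)
    return {
        "governed": governed,
        "ungoverned": ungoverned,  # explicitly out of scope
        "coverage": len(governed) / total if total else 0.0,
    }
```

For a map such as `{"wire_transfer": True, "court_filing": True, "env_delete": False, "client_email": False}`, the report lists two governed and two ungoverned workflows with a coverage of 0.5. Tracking that number across reporting periods shows how coverage expands over time.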

The commit boundary you actually underwrite

You can already evaluate access controls (IAM), policy posture (GRC), and incident response.

Agentic systems introduce a new underwriting surface:

the commit boundary — the last moment before an irreversible action executes.

At that boundary, a governed system must be able to return only three outcomes:

- Refuse (no)

- Approve (yes)

- Supervised override (yes, but only with a named decision-maker attached)

A Risk Ledger is the durable record of those execution-time authority decisions — what was refused, what was allowed, and who owned the yes.

Alignment without containment is persuasion. Containment at scale requires a gate — and a ledger.

Risk Ledger Metrics you can actually price

Here’s the decision-grade set that turns governance into measurable exposure.

A) Prevented loss signals

- Refused high-risk intents (count, rate, trend) by action type and environment

- Top refusal reason codes (what keeps trying to happen)

- Repeat offender patterns (same actor/system repeatedly attempting out-of-policy actions)

B) Accepted risk signals

- Supervised override volume (how often policy is bypassed with approval)

- Override concentration (a few approvers rubber-stamping = risk)

- Override reasons taxonomy (emergency vs convenience)

C) Control maturity signals

- Policy version drift (changes in refusal distribution after policy updates)

- Coverage % of high-risk actions (what fraction of “commit events” are governed)

- Time-to-decision at the gate (latency + operational reality)

D) Exposure map (the one insurers never get today)

- Governed vs ungoverned workflows (true surface area of uncontrolled execution)

The Risk Ledger turns action risk from a story into a dataset.
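Several of these metrics reduce to simple counts over ledger entries. The sketch below assumes each entry is a dictionary with a `verdict`, an optional list of `reason_codes`, and, for overrides, an `approver`; those field names are hypothetical, and "override concentration" is interpreted here as the share of overrides approved by the single most frequent approver.

```python
from collections import Counter

def refusal_rate(entries: list) -> float:
    """Fraction of governed attempts that were refused."""
    total = len(entries)
    refused = sum(1 for e in entries if e["verdict"] == "refuse")
    return refused / total if total else 0.0

def top_reason_codes(entries: list, n: int = 3) -> list:
    """Most frequent reason codes among refusals, as (code, count) pairs."""
    counts = Counter(code
                     for e in entries if e["verdict"] == "refuse"
                     for code in e.get("reason_codes", []))
    return counts.most_common(n)

def override_concentration(entries: list) -> float:
    """Share of overrides signed off by the single most frequent approver."""
    approvers = Counter(e["approver"] for e in entries
                        if e["verdict"] == "override")
    total = sum(approvers.values())
    return max(approvers.values()) / total if total else 0.0
```

A concentration close to 1.0 with high override volume is the rubber-stamping pattern described above; the same counts can be grouped by action type or environment to produce trends.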

A reason-code taxonomy you can standardize

You don’t need prompts or model internals to underwrite action governance. You need repeatable categories that explain why actions were refused or escalated.

A minimal reason-code set that travels across legal, finance, healthcare, and infrastructure:

- AUTHORITY — missing/invalid delegation or consent

- SCOPE — wrong matter/account/jurisdiction/environment

- ROLE — actor role not permitted for the action type

- SUPERVISION REQUIRED — policy requires named approval

- DATA CLASSIFICATION — restricted destination/channel given sensitivity labels

- SAFETY / POLICY — explicitly prohibited action class

- INTEGRITY / CONTEXT ERROR — missing anchors, malformed payload, dependency unreachable (fail-closed)
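To keep the taxonomy repeatable across systems, the codes can be pinned down as a closed enumeration so that free-text variants are rejected rather than silently accepted. A minimal sketch: the labels come from the list above, while the `ReasonCode` class and `parse_reason_code` helper are illustrative names.

```python
from enum import Enum

class ReasonCode(Enum):
    AUTHORITY = "AUTHORITY"
    SCOPE = "SCOPE"
    ROLE = "ROLE"
    SUPERVISION_REQUIRED = "SUPERVISION REQUIRED"
    DATA_CLASSIFICATION = "DATA CLASSIFICATION"
    SAFETY_POLICY = "SAFETY / POLICY"
    INTEGRITY_CONTEXT_ERROR = "INTEGRITY / CONTEXT ERROR"

def parse_reason_code(label: str) -> ReasonCode:
    """Normalize case and whitespace, then look up the code.

    Raises ValueError for any label outside the taxonomy.
    """
    normalized = " ".join(label.upper().split())
    return ReasonCode(normalized)
```

Rejecting unknown labels keeps counts comparable between insureds, since every refusal lands in one of a fixed set of categories.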

Underwriting doesn’t require perfect prediction. It requires provable authority decisions at the moment of action.

What changes when a Risk Ledger exists

Without a ledger (today’s default)

- Incidents are reconstructed.

- Root cause is disputed.

- “We had controls” is a claim.

- Premiums are guesswork.

- Exclusions expand.

With a ledger (underwriteable action risk)

- Near-misses become evidence.

- Overrides become accountable.

- Policy enforcement is provable.

- Governance becomes measurable.

- Insurance becomes rational.

Logs tell you what happened. Ledgers prove what was prevented.

Procurement clause insurers can paste into underwriting requirements

Risk Ledger Requirement (Underwriting):

The insured shall front designated high-risk workflows, including AI-enabled workflows where applicable, with an execution-time authority gate capable of approve/refuse/supervised override decisions and shall retain a tenant-owned, append-only Risk Ledger of sealed decision artifacts for each governed attempt, including policy versioning, reason codes, and named accountability for supervised overrides.

FAQ for insurers (the objections you’re already thinking)

Does this require reading prompts or model internals?

No. A defensible ledger is primarily metadata + authority context + sealed verdicts.

Is this just “better logging”?

No. Logs are forensics. A ledger is execution-time authority evidence.

What about bypass?

A ledger must come with a coverage map. Governed workflows must be non-bypassable when wired. Anything not wired is explicitly out of scope.

What if policy is wrong?

Then the ledger makes that visible: it shows how the policy behaved over time. Underwriting improves because you can measure drift, overrides, and corrective action.

If an organization cannot produce a Risk Ledger, it cannot produce decision-grade evidence of prevention, only of recovery.