Thinking OS™ | The Arrival of Refusal Infrastructure for Legal AI and Regulated Industries

AI in law isn’t breaking because it’s weak.

It’s breaking because nothing is structurally stopping it from acting.

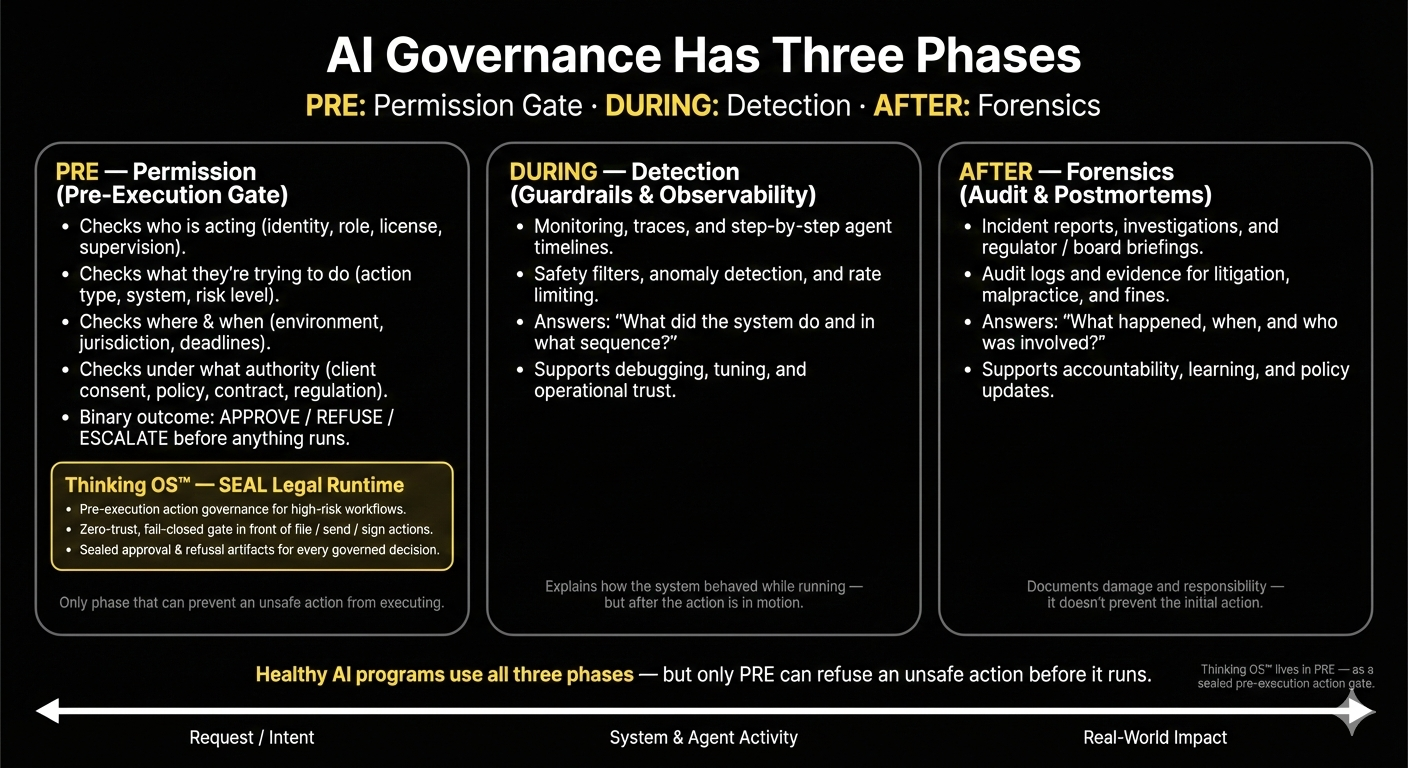

Most “AI governance” in firms now lives in three places:

- Data perimeter – “no client data in public LLMs.”

- Model guardrails – testing, red-teaming, hallucination controls.

- Logs & policies – usage policies, playbooks, after-the-fact audits.

All necessary.

None of them can actually stop a bad filing from going out the door.

What’s been missing is a layer that doesn’t ask “what did the model say?” but instead asks:

“May this action execute at all?”

That missing discipline is Action Governance.

The architecture that implements it is Refusal Infrastructure for Legal AI.

Thinking OS™ is that layer.

From Chatbots to Agents: Why This Became Required

In 2023, the risk was a chatbot saying something embarrassing.

In 2026, the risk is an AI-assisted workflow that can:

- file a motion,

- send a notice to the wrong regulator,

- approve a settlement, or

- move client money,

all before anyone with real authority has a chance to say no.

“Guardrails” are suggestions to a model.

In a courtroom, “we suggested the AI behave” is not a defense.

You need a gate that can prove the system was not allowed to act.

What Refusal Infrastructure Does

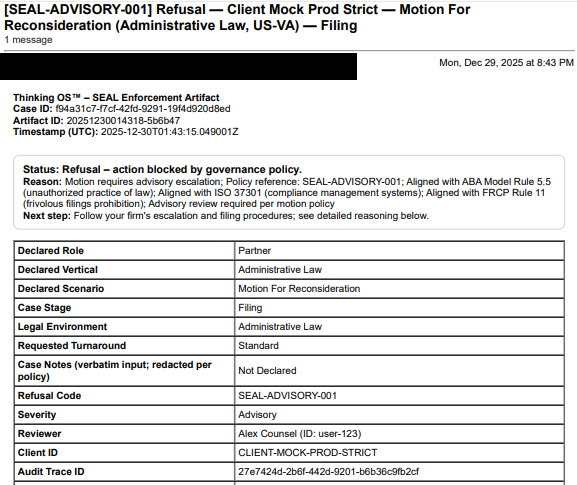

Refusal Infrastructure for Legal AI is a sealed governance layer in front of high-risk legal actions — file, send, approve, move.

For every attempt to take one of those actions, it asks a single structured question:

“Is this specific person or system allowed to take this specific action, in this matter, under this authority, right now: yes or no?”

It does not draft, summarize, or advise.

At runtime it returns exactly one of three verdicts:

- ✅ Approve – the action may proceed under configured rules.

- ❌ Refuse – the action is blocked and does not execute.

- 🟧 Route for supervision – a named human has to sign off.
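
The question and the three verdicts above can be sketched as a small decision function. This is an illustrative sketch only, not Thinking OS™'s implementation: the `ActionRequest` fields, the `RULES` table, and the `MATTER_ACTORS` mapping are hypothetical names invented for the example, and the "right now" time check is left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    ROUTE = "route_for_supervision"


@dataclass(frozen=True)
class ActionRequest:
    actor: str       # person or system attempting the action
    action: str      # e.g. "file", "send", "approve", "move"
    matter: str      # matter identifier
    authority: str   # role or delegation the actor is acting under


# Hypothetical rule table: (authority, action) -> verdict.
RULES = {
    ("partner", "file"): Verdict.APPROVE,
    ("associate", "file"): Verdict.ROUTE,
    ("partner", "approve"): Verdict.APPROVE,
}

# Hypothetical assignment of actors to matters.
MATTER_ACTORS = {"M-100": {"alice", "bob"}}


def decide(req: ActionRequest) -> Verdict:
    """Return a verdict; anything not explicitly permitted is refused."""
    if req.actor not in MATTER_ACTORS.get(req.matter, set()):
        return Verdict.REFUSE
    return RULES.get((req.authority, req.action), Verdict.REFUSE)
```

The design choice worth noticing is the default: a request that matches no rule is refused rather than approved, so a missing rule blocks the action instead of letting it through.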

Every decision produces a sealed, tamper-evident artifact your firm controls.

That’s the difference between describing your governance and proving which actions were allowed to run.
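
One common way to make a decision record tamper-evident is a hash chain, where each record includes the hash of the record before it. The sketch below assumes that technique purely for illustration; the article does not say how Thinking OS™ seals its artifacts, and `seal`, `verify`, and `GENESIS` are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # Canonical JSON so the same body always hashes to the same value.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def seal(decision: dict, prev_hash: str) -> dict:
    """Bind a decision to the previous record's hash."""
    body = {"decision": decision, "prev_hash": prev_hash}
    return {**body, "hash": _digest(body)}


def verify(chain: list) -> bool:
    """Check every hash and every link; any edit breaks verification."""
    prev = GENESIS
    for record in chain:
        body = {"decision": record["decision"], "prev_hash": record["prev_hash"]}
        if record["prev_hash"] != prev or record["hash"] != _digest(body):
            return False
        prev = record["hash"]
    return True
```

A chain like this only shows that records were altered after the fact. Someone able to rewrite the whole chain could recompute every hash, so a production design would likely also need signatures or an externally held anchor.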

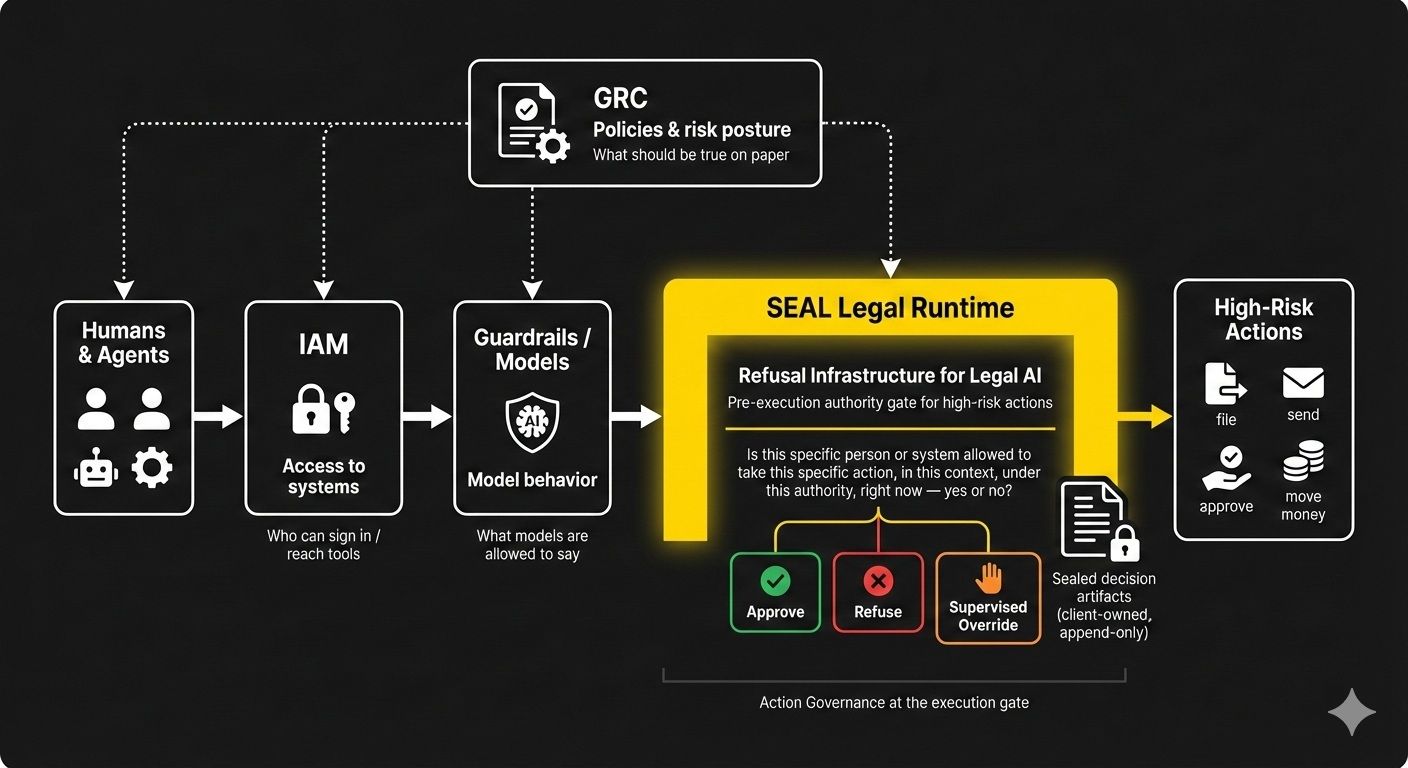

Where Thinking OS™ Sits in the Stack

Refusal Infrastructure doesn’t replace your existing controls. It adds a checkpoint at the execution boundary:

- Upstream of output – before AI-assisted or human-initiated actions are filed or sent.

- Downstream of identity and policy – after roles, verticals, and matter policies are defined in your GRC and IAM systems.

- Inside wired workflows – non-bypassable for any legal workflow routed through it.
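
The idea of a workflow being "wired" through the gate can be sketched as a wrapper that refuses to call the underlying action unless the gate says yes. Everything here is hypothetical (`gated`, `Refused`, `simple_gate`, `file_motion`), and an in-process wrapper like this is only an illustration: real non-bypassability would presumably depend on the gate being the only component that holds the credentials needed to file or send.

```python
class Refused(Exception):
    """Raised when the gate does not allow an action to execute."""


def gated(action_name, gate):
    """Wrap a function so it runs only if gate(actor, action_name) is True."""
    def decorator(func):
        def wrapper(actor, *args, **kwargs):
            # Anything other than an explicit True blocks execution.
            if gate(actor, action_name) is not True:
                raise Refused(f"{actor} may not {action_name}")
            return func(actor, *args, **kwargs)
        return wrapper
    return decorator


# Hypothetical policy: only these (actor, action) pairs are allowed.
ALLOWED = {("alice", "file")}


def simple_gate(actor, action_name):
    return (actor, action_name) in ALLOWED


@gated("file", simple_gate)
def file_motion(actor, document_id):
    # Stand-in for the real filing call.
    return f"{document_id} filed by {actor}"
```

Calling `file_motion("alice", "DOC-1")` runs the filing stand-in, while `file_motion("bob", "DOC-1")` raises `Refused` before the wrapped function is ever entered.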

In that position it works alongside:

- IAM – who can sign in and reach tools.

- Guardrails / model safety – what models are allowed to say.

- GRC / policy platforms – what should be true on paper.

Thinking OS™ adds the missing verdict:

“Given this actor, this context, and this authority, may this action execute right now: approve, refuse, or route for supervision?”

SEAL Legal Runtime: The Implementation in Law

In our world, that gate is the SEAL Legal Runtime — Thinking OS™’s sealed enforcement layer for law.

In wired legal workflows:

- Every high-risk step passes through a pre-execution authority gate.

- Requests that are out of scope, missing consent, or outside approved verticals are refused, not silently passed.

- Each governed decision leaves behind a client-owned, tamper-evident artifact designed for regulators, insurers, and courts — without exposing matter content or model prompts.
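
The refusal conditions and the content-free artifact described above could look roughly like the following. This is a sketch under assumptions, not SEAL's actual behavior: the request fields, the reason codes, `APPROVED_VERTICALS`, and the choice to record a SHA-256 digest in place of the document are all invented for illustration.

```python
import hashlib
from typing import Optional

APPROVED_VERTICALS = {"litigation", "regulatory"}  # hypothetical configuration


def refusal_reason(request: dict) -> Optional[str]:
    """Return a reason code if the request must be refused, else None."""
    # Missing fields fail the checks, so incomplete requests are refused.
    if request.get("action") not in request.get("scope", ()):
        return "out_of_scope"
    if not request.get("client_consent", False):
        return "missing_consent"
    if request.get("vertical") not in APPROVED_VERTICALS:
        return "unapproved_vertical"
    return None


def artifact_for(request: dict, content: bytes) -> dict:
    """Record the decision with a digest of the content, never the content."""
    reason = refusal_reason(request)
    return {
        "actor": request.get("actor"),
        "action": request.get("action"),
        "verdict": "refuse" if reason else "approve",
        "reason": reason,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
```

The digest lets a reviewer later confirm which document a decision referred to, provided they already hold that document, without the artifact itself carrying any matter text.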

SEAL does not practice law, draft documents, or replace attorney judgment.

It enforces the boundaries your leadership defines and proves what it allowed or blocked.

Why Thinking OS™ Exists

You can govern data.

You can guardrail models.

You can log what happened.

But in legal, the only thing that really counts is what was allowed to leave the building under your seal.

Thinking OS™ exists to govern that moment.

Not as another assistant, not as another feature — but as Refusal Infrastructure for Legal AI:

- a pre-execution authority gate in front of high-risk actions,

- enforcing Action Governance at runtime,

- with sealed evidence for every yes, no, and supervised sign-off.

If your stack has models, guardrails, and logs but no pre-execution authority gate, there is still nothing structural standing between AI-assisted work and the real world.