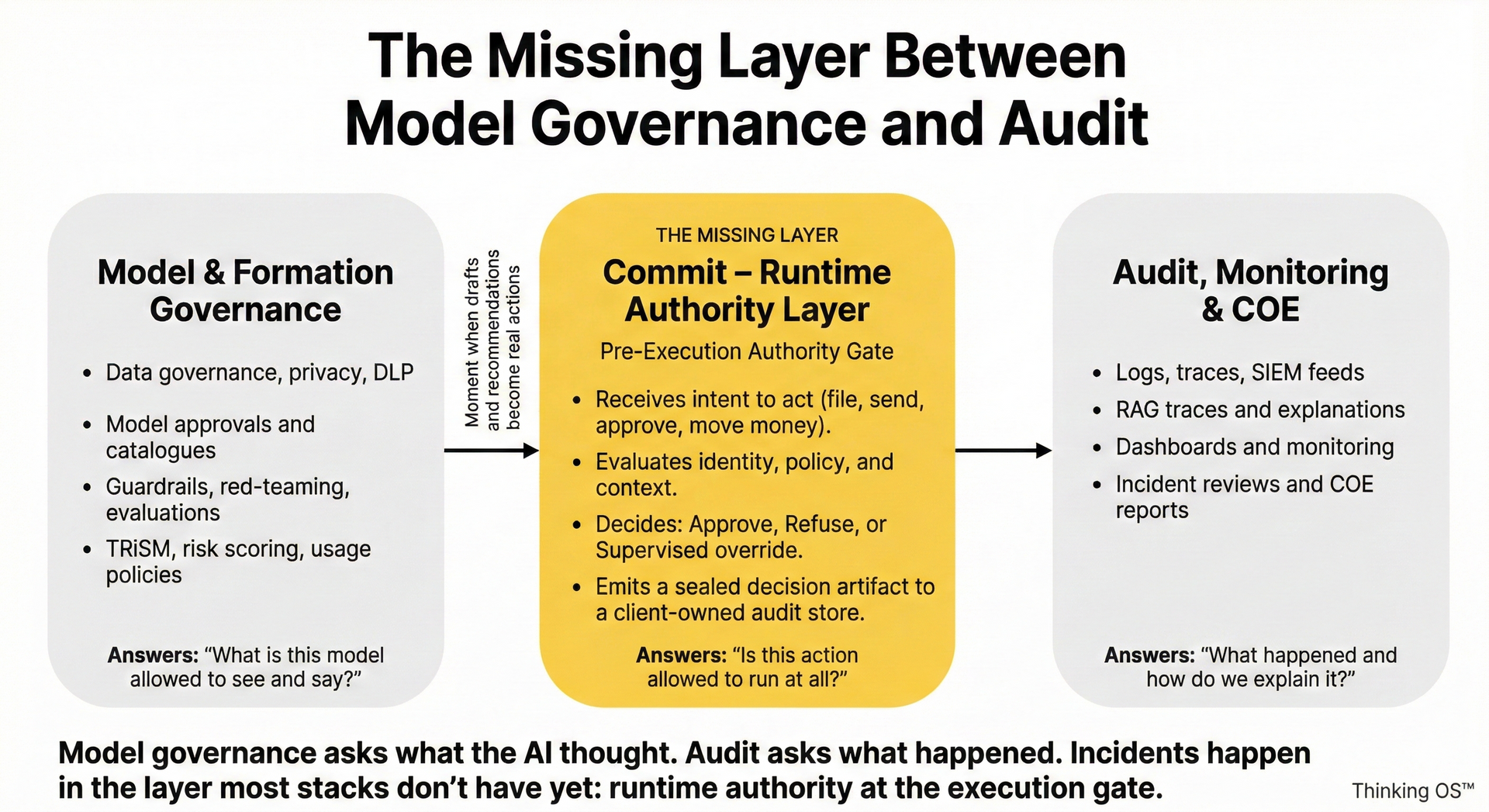

The Missing Layer Between Model Governance and Audit

Why You Still Get AI Incidents Even When Both Look “Mature”

Most AI governance stories today have two strong pillars:

- Model governance – what data the model can see, how it’s evaluated, how “safe” its outputs are.

- Audit and monitoring – logs, traces, dashboards, COEs, post-incident reviews.

If you have both, it sounds like you’re covered.

Yet the uncomfortable pattern is the same across incidents:

The model behaved “within spec.”

The logs were there.

And something still hit the real world that should never have been allowed to run.

The problem isn’t a lack of visibility or evaluation.

The problem is the missing layer between model governance and audit: a runtime pre-execution authority layer that decides which actions may execute at all.

This article is about that gap—and what has to live there.

1. The Two Layers Everyone Already Has

Most serious organizations now have some version of:

1) Model & Formation Governance

- Data governance, privacy, DLP

- Model approvals and catalogues

- Safety guardrails, red-teaming, evals

- TRiSM / risk scoring, usage policies

Question it answers:

“What is this model allowed to see and say?”

2) Audit, Monitoring & COE

- Logs, traces, SIEM feeds

- RAG traces, “why did the model answer this way?”

- COE / incident reports

- Forensic reconstruction after something went wrong

Question it answers:

“What happened and how do we explain it?”

Both are necessary.

Neither answers the question regulators, boards, and courts actually ask after an AI-mediated incident:

“Who was allowed to let this action happen, under what authority, and where is your proof?”

That question doesn’t live in the model, and it doesn’t live in the logs.

It lives in the layer you don’t have yet.

2. The Missing Middle: Commit – Runtime Authority

Between “what the AI model said” and “what the logs show after the fact” there is a single critical moment:

The moment a draft, recommendation, or agent plan tries to become a real action.

File. Send. Approve. Move money. Delete. Deploy. Notify a regulator. Change a record.

That moment is where AI governance either exists or collapses.

The missing layer is what we call the Commit – Authority layer:

- A pre-execution authority gate that receives an intent to act

- Evaluates it against identity + policy + context

- Returns only three possible decisions:

  - ✅ Approve – action may run

  - ❌ Refuse – action is blocked

  - 🟧 Supervised override – action may run only with named human responsibility

- Emits a sealed artifact of that decision into a client-owned audit store

In other words:

- Model governance → “What did the AI system think?”

- Commit / authority gate → “Is it allowed to act?”

- Audit → “What did it actually do and what can we prove later?”

Without the middle layer, model governance and audit form a loop around the real problem without ever constraining it.
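To make the gate concrete, here is a minimal sketch of an authority check that takes an intent to act and returns exactly one of the three decisions. Everything in it is an assumption for illustration: the `Intent` fields, the `POLICY` table, the role names, and the amount thresholds are hypothetical, not a description of any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    SUPERVISED_OVERRIDE = "supervised_override"


@dataclass(frozen=True)
class Intent:
    actor: str      # who is asking (human, agent, or service)
    role: str       # role resolved from the identity provider
    action: str     # e.g. "send_payment"
    context: dict   # whatever the policy needs, e.g. {"amount": 500}


# Hypothetical policy table: (role, action) -> rule. In practice this would be
# derived from the organization's own GRC and policy systems.
POLICY = {
    ("analyst", "send_payment"): {"max_amount": 1_000},
    ("controller", "send_payment"): {"max_amount": 50_000},
}


def evaluate(intent: Intent) -> Decision:
    """Return exactly one of the three decisions for an intent to act."""
    rule = POLICY.get((intent.role, intent.action))
    if rule is None:
        return Decision.REFUSE            # no rule grants this authority
    amount = intent.context.get("amount")
    if not isinstance(amount, (int, float)) or amount < 0:
        return Decision.REFUSE            # missing or invalid context is a no
    if amount <= rule["max_amount"]:
        return Decision.APPROVE
    # Above the role's limit: may run only with a named human taking responsibility.
    return Decision.SUPERVISED_OVERRIDE
```

Note that the function never looks at how the request was produced. A person, a script, and an LLM agent with the same role and the same intent get the same answer.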

3. Why Model Governance + Audit Still Let Bad Actions Happen

You see the same failure pattern in every sector:

- Model behaves “correctly” given its inputs. Guardrails are in place, evals look fine.

- IAM says the caller is allowed to use the system. Credentials check out; role has broad permissions.

- Automation or agent uses the model’s output to trigger a high-risk action. A filing, an approval, a destructive operation.

- Monitoring sees the event after it happens. Sometimes seconds later, sometimes days.

- Incident review concludes: “User error / misconfigured role / process gap.”

Nothing in that chain ever asked:

“Is this specific actor allowed to take this specific action, in this context, right now—yes, no, or escalate?”

Everyone was staring at capability (what the model did) and telemetry (what happened after), instead of authority.
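The difference between "allowed to use the system" and "allowed to take this action" can be shown in a few lines. This is a sketch under stated assumptions: the role name, the actions, and both lookup tables are hypothetical.

```python
# Hypothetical IAM view: may this role use the automation platform at all?
ROLE_CAN_USE_SYSTEM = {"ops-engineer": True}

# Hypothetical authority view: (role, action, environment) -> explicitly granted?
AUTHORITY = {
    ("ops-engineer", "restart_service", "production"): True,
    ("ops-engineer", "delete_database", "staging"): True,
}


def iam_check(role: str) -> bool:
    """Coarse check: the caller holds a role that may use the system."""
    return ROLE_CAN_USE_SYSTEM.get(role, False)


def authority_check(role: str, action: str, environment: str) -> bool:
    """Specific check: anything not explicitly granted is not authorized."""
    return AUTHORITY.get((role, action, environment), False)
```

With these tables, `iam_check("ops-engineer")` passes for every request, while `authority_check("ops-engineer", "delete_database", "production")` does not. The failure chain above only ever runs the first kind of check.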

4. What a Real Runtime Authority Layer Has to Do

To actually close the gap between model governance and audit, the missing layer needs a few non-negotiables:

1. Pre-execution, not advisory

- It sits in front of high-risk actions (file / send / approve / move), not beside them.

- If the gate says no, the action does not run. There is no “best effort.”

2. Authority-centric, not model-centric

- It doesn’t care how the draft was created—partner, junior, or LLM.

- It only cares:

Is this actor allowed to do this thing here, now, under these rules?

3. Operator-agnostic

- Humans, agents, services all hit the same gate.

- No “AI shortcut lane” around the controls.

4. Fail-closed by design

- Unknown role, missing consent, ambiguous jurisdiction → refusal, not silent pass.

5. Client-owned rules

- Authority logic is derived from your GRC, ethics rules, risk appetite, and matter / account systems.

- Not invented in a vendor’s opaque rules engine.

6. Client-owned evidence

- Every approve / refuse / supervised override generates a sealed, append-only artifact in your audit store.

- Enough structure to answer: who, what, where, under which authority, decided what, when.

If any of those are missing, you don’t have the missing layer.

You have more observability around an unconstrained system.
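Two of these requirements, fail-closed enforcement and sealed append-only evidence, are easy to sketch. The example below is illustrative only: `AuditStore` is an in-memory stand-in for a client-owned store, `gated` is a hypothetical wrapper, and the seal is a plain SHA-256 hash chain rather than any specific product's artifact format.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditStore:
    """In-memory stand-in for a client-owned, append-only audit store."""

    def __init__(self):
        self._records = []

    def append(self, who, what, where, authority, decision):
        prev_seal = self._records[-1]["seal"] if self._records else "0" * 64
        body = {
            "who": who, "what": what, "where": where,
            "authority": authority, "decision": decision,
            "when": datetime.now(timezone.utc).isoformat(),
            "prev_seal": prev_seal,
        }
        # The seal covers the record body and the previous seal, so tampering
        # with an earlier record breaks the chain.
        canonical = json.dumps(body, sort_keys=True).encode("utf-8")
        record = dict(body, seal=hashlib.sha256(canonical).hexdigest())
        self._records.append(record)
        return record

    def verify(self):
        """Recompute every seal and check that each record links to the last."""
        prev_seal = "0" * 64
        for record in self._records:
            body = {k: v for k, v in record.items() if k != "seal"}
            canonical = json.dumps(body, sort_keys=True).encode("utf-8")
            if body["prev_seal"] != prev_seal:
                return False
            if hashlib.sha256(canonical).hexdigest() != record["seal"]:
                return False
            prev_seal = record["seal"]
        return True


def gated(store, decide, action, *, who, what, where, authority):
    """Run `action` only if `decide` approves; record the decision first."""
    try:
        decision = decide(who, what, where, authority)
    except Exception:
        decision = "refuse"        # fail closed if the gate itself errors
    if decision not in ("approve", "refuse", "supervised_override"):
        decision = "refuse"        # unknown answers are treated as a no
    store.append(who, what, where, authority, decision)
    if decision != "approve":
        # A supervised override would need a named approver before running;
        # this sketch simply does not run the action.
        return decision, None
    return decision, action()
```

The design choice that matters is the order of operations in `gated`: the decision is written to the store before the action runs, and the action is only reachable through the approve branch.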

5. Where AI Governance Platforms Stop

AI governance platforms are doing important work:

- inventories of models and agents

- policy mapping to EU AI Act, NIST AI RMF, ISO 42001

- risk workflows and attestations

- monitoring and reporting

But almost all of them live in two places:

- Before the model runs (policies, approvals, catalogues)

- After the fact (monitoring, dashboards, audits)

Very few are:

- sitting directly in front of high-risk actions, and

- returning approve / refuse / supervised override decisions, and

- emitting client-owned decision artifacts per action.

So when someone says:

“Our AI governance platform enforces policies at runtime.”

The questions for a GC / CISO are:

- At which exact point in the workflow can it hard-refuse a filing / payment / approval?

- Whose rules is it enforcing?

- Who owns the artifacts that prove what it allowed or blocked?

If the answers are vague, you’re looking at model governance + audit, not the missing middle.

6. How SEAL Legal Runtime Fills the Gap (Legal Example)

In law, once you’ve:

- filed with a court,

- sent to a regulator,

- disclosed to a counterparty,

you cannot “un-file” or “un-send.”

SEAL Legal Runtime, built on Thinking OS™, is designed specifically as this missing layer for legal AI:

- Sits between internal tools / AI / DMS / e-filing and the outside world.

- Receives intent-to-act payloads:

  - who is acting (from IdP / org chart)

  - on which matter and venue

  - what they’re trying to do (motion type / action type)

  - how urgent

  - under which client / regulatory authority

- Evaluates that against firm-owned rules from GRC, ethics, and matter systems.

- Returns approve / refuse / supervised override.

- Emits sealed decision artifacts into a firm-controlled audit store.
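As a rough illustration of what an intent-to-act payload and a firm-owned rule could look like, consider the sketch below. It is not SEAL's API or schema. The field names, the `MATTER_TEAM` and `ADMISSIONS` tables, and the rule itself are assumptions made up for this example, and the rule is not legal guidance.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class FilingIntent:
    actor_id: str       # who is acting, as resolved by the IdP / org chart
    matter_id: str      # which matter
    venue: str          # which court or regulator
    action_type: str    # e.g. a motion type
    urgency: str        # e.g. "routine" or "emergency"
    authority_ref: str  # client / regulatory authority being relied on


def parse_intent(payload: dict) -> Optional[FilingIntent]:
    """Build a FilingIntent, or return None if any field is missing or blank."""
    values = {}
    for f in fields(FilingIntent):
        value = payload.get(f.name)
        if not isinstance(value, str) or not value.strip():
            return None
        values[f.name] = value.strip()
    return FilingIntent(**values)


# Hypothetical firm-owned records: who is staffed on which matter, and who is
# admitted in which venue.
MATTER_TEAM = {"M-100": {"partner-1", "associate-2"}}
ADMISSIONS = {"partner-1": {"venue-a"}, "associate-2": set()}


def decide(payload: dict) -> str:
    intent = parse_intent(payload)
    if intent is None:
        return "refuse"                   # incomplete intent never passes
    if intent.actor_id not in MATTER_TEAM.get(intent.matter_id, set()):
        return "refuse"                   # actor is not on this matter
    if intent.venue in ADMISSIONS.get(intent.actor_id, set()):
        return "approve"
    return "supervised_override"          # needs a named, responsible supervisor
```

In this sketch, a partner admitted in the venue is approved, an associate on the same matter is routed to a supervised override, and an agent that is not staffed on the matter is refused, whatever the quality of the draft it produced.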

Model governance tools can still manage:

- which models are allowed,

- what data they see,

- how their outputs are evaluated.

Audit and monitoring can still:

- observe performance,

- support investigations,

- power COE reviews.

SEAL simply adds:

“No high-risk legal action runs without passing a pre-execution authority gate we control, and we own the evidence of every decision.”

That’s the missing layer.

7. Questions You Can Use Tomorrow

If you’re a GC, CISO, risk leader, or partner reviewing AI governance claims, these will surface the gap quickly:

- “Show me the exact point in this workflow where a filing / payment / approval can be refused before it executes.”

- “Whose rules are enforced there—and where do they live? In our systems, or yours?”

- “When that gate says NO, what record do we get? Who owns it? Where is it stored?”

- “If your platform disappeared tomorrow, would we still know who was allowed to do what, and under which authority?”

If the answers live only in model governance and audit dashboards, you’ve just found the missing layer between them.

And that—Commit, runtime authority at the execution gate—is where Thinking OS™ chooses to live.