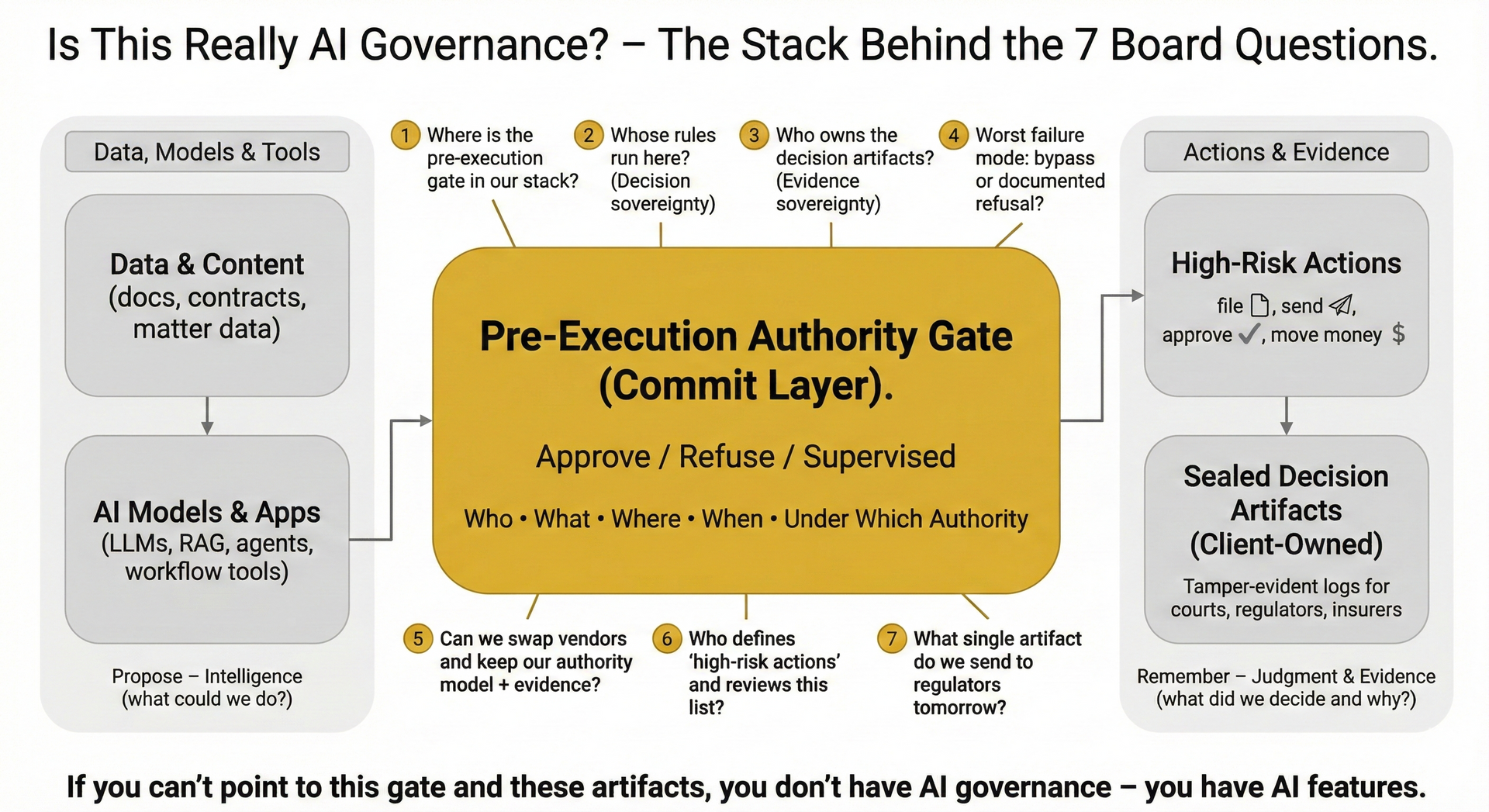

Is This Really AI Governance? 7 Questions Boards Can Ask in One Meeting

Most “AI governance” decks sound impressive but leave one blind spot:

Who is actually allowed to do what, where, under which authority, before anything executes?

These seven questions let a board test, in one meeting, whether the organization has real governance or just model settings and policies on paper.

1. Where is the pre-execution gate in our AI stack?

“Show me, on one diagram, where we decide whether an AI-driven action may run at all.”

- Look for a clearly defined authority gate in front of high-risk actions (file, send, approve, move money, change records).

- Good answer: “Here is the runtime that says approve / refuse / supervised before anything leaves the building.”

- Red flag: “The system logs everything and we can always audit it later.”
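
For technical teams, the gate described above can be sketched in a few lines. This is a minimal illustration, not a reference design: the role names, action names, and the `POLICY` table are hypothetical, and a real gate would load firm-owned policy rather than hard-code it.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    SUPERVISED = "supervised"


@dataclass(frozen=True)
class ActionRequest:
    actor_role: str  # hypothetical role label, e.g. "associate"
    action: str      # hypothetical action name, e.g. "file" or "move_money"


# Hypothetical policy table: (role, action) -> verdict.
POLICY = {
    ("partner", "file"): Verdict.APPROVE,
    ("associate", "file"): Verdict.SUPERVISED,
    ("associate", "send"): Verdict.APPROVE,
}


def authority_gate(request: ActionRequest) -> Verdict:
    """Decide before execution; anything not listed is refused."""
    return POLICY.get((request.actor_role, request.action), Verdict.REFUSE)


def execute(request: ActionRequest, run) -> str:
    """Call `run` only if the gate approves the request."""
    verdict = authority_gate(request)
    if verdict is Verdict.APPROVE:
        run()
        return "executed"
    if verdict is Verdict.SUPERVISED:
        return "held for human sign-off"
    return "refused"
```

The point for the board is the ordering: the verdict is computed first, and the action runs only on an explicit approval.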

2. Whose rules run there? (Decision sovereignty)

“At that gate, whose rules are we enforcing – ours, or the vendor’s?”

- Good answer: firm-owned policies (roles, risk posture, matter rules) are loaded into the gate; vendor logic is subordinate.

- You want to hear: “If we change our policy tomorrow, the gate changes tomorrow.”

- Red flag: “The vendor’s generic ‘safety layer’ decides what’s allowed.”

3. Who owns the artifacts? (Evidence sovereignty)

“When the gate allows, refuses, or escalates, who owns the record of that decision?”

- Good answer: client-owned, tamper-evident artifacts that record who tried to do what, under which policy set, and why it was approved or blocked.

- Those artifacts should live in your environment and be exportable for regulators, courts, and insurers.

- Red flag: “The logs live in the vendor’s SaaS; we can request reports if needed.”
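
One common way to make a decision log tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below assumes that approach; the record fields and function names are illustrative, and the article does not prescribe a specific mechanism.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict, prev_hash: str) -> str:
    """Hash the record together with the previous entry's hash."""
    body = json.dumps({"record": record, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> dict:
    """Append a decision record that is chained to the prior entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(record, prev_hash),
    }
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["record"], entry["prev_hash"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because the log is plain JSON-compatible data held in your own environment, it can be exported and re-verified by a third party without access to the vendor's systems.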

4. What is the worst-case failure mode: bypass or documented refusal?

“If something goes wrong, is it because the gate was bypassed, or because it refused and we ignored it?”

- A healthy design fails closed: the default is “no,” with a documented refusal or supervised override.

- You want to hear: “If the gate is down or uncertain, the action does not execute.”

- Red flag: “If the AI is confident, it just proceeds; we review afterwards.”
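
In code, "fails closed" means the action runs only on an explicit approval and every other outcome is a documented "no." A minimal sketch, assuming a hypothetical `check` callable that stands in for the gate:

```python
def fail_closed(check, action):
    """Run `action` only when `check` explicitly returns "approve".

    `check` is a hypothetical stand-in for the gate. It may raise
    (gate down) or return something unexpected (gate uncertain).
    """
    try:
        verdict = check()
    except Exception as exc:
        # Gate unreachable: refuse and record why, never proceed.
        return {"executed": False, "verdict": "refuse",
                "reason": f"gate error: {exc}"}
    if verdict == "approve":
        action()
        return {"executed": True, "verdict": "approve",
                "reason": "gate approved"}
    if verdict == "supervised":
        return {"executed": False, "verdict": "supervised",
                "reason": "awaiting human override"}
    # Anything else, including None or an unknown value, is a "no".
    return {"executed": False, "verdict": "refuse",
            "reason": f"no approval (gate said {verdict!r})"}
```

Note that there is no branch in which a gate error or an ambiguous answer leads to execution; the returned dictionary is the documented refusal.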

5. Can we swap vendors and keep our authority model and evidence?

“If we change AI or workflow vendors, what survives?”

- Good answer: your authority model (who may do what, where) and your decision artifacts are portable and remain intact if you change model providers or UI layers.

- This is the test for true control-plane vs. vendor-plane separation.

- Red flag: “If we move off this platform, we lose the policies, logs, and approvals history.”

6. Who defines “high-risk actions,” and how often is that list reviewed?

“Show me the current list of actions that cannot run without explicit authorization.”

- Good answer: a board-visible catalog of high-risk actions (by system, data class, and destination) tied to the gate – updated as the business changes.

- You want clear ownership: “This committee updates the list; the gate enforces it.”

- Red flag: “Everything is treated the same; the model just has general guardrails.”
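
A catalog like this can be ordinary structured data that both the board and the gate read. The sketch below is one possible shape; the entries, the `owner` field, and the 180-day review window are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class CatalogEntry:
    system: str
    data_class: str
    destination: str
    owner: str           # committee accountable for this entry
    last_reviewed: date


# Hypothetical catalog entries.
CATALOG = [
    CatalogEntry("payments", "client_funds", "external_bank",
                 "risk-committee", date(2024, 1, 15)),
    CatalogEntry("email", "privileged", "external_recipient",
                 "risk-committee", date(2024, 6, 1)),
]


def requires_authorization(system, data_class, destination, catalog=CATALOG):
    """True if this combination is listed as a high-risk action."""
    key = (system, data_class, destination)
    return any((e.system, e.data_class, e.destination) == key for e in catalog)


def overdue_for_review(catalog, today, max_age_days=180):
    """Entries whose last review is older than the allowed window."""
    cutoff = today - timedelta(days=max_age_days)
    return [e for e in catalog if e.last_reviewed < cutoff]
```

A report built from `overdue_for_review` gives the board a direct answer to "how often is that list reviewed?"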

7. If a regulator or opposing counsel calls tomorrow, what single artifact do we send first?

“Walk me through the evidence we would rely on to prove that an AI-assisted action was authorized and in-policy.”

- Good answer: a sealed decision artifact showing actor, action, matter, policy set, verdict (approve/refuse/supervised), and reasons.

- The board should see that this artifact is standardized and repeatable, not manually assembled after the fact.

- Red flag: “We’d pull logs from several systems and reconstruct what happened.”
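
As an illustration of "standardized and repeatable," the artifact can be a fixed record type with a cryptographic seal computed at decision time. This sketch assumes an HMAC seal with a firm-held key; the field names follow the list above, but the sealing scheme itself is an assumption, not something the article specifies.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionArtifact:
    actor: str
    action: str
    matter: str
    policy_set: str
    verdict: str      # "approve", "refuse", or "supervised"
    reasons: tuple    # short human-readable reasons


def seal(artifact: DecisionArtifact, key: bytes) -> str:
    """Compute an HMAC-SHA256 seal over the artifact's canonical JSON."""
    payload = json.dumps(asdict(artifact), sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(artifact: DecisionArtifact, seal_hex: str, key: bytes) -> bool:
    """Check that the artifact still matches the seal it was issued with."""
    return hmac.compare_digest(seal(artifact, key), seal_hex)
```

Every decision produces the same six fields and a seal, so the "single artifact" is generated by the gate itself rather than assembled from logs afterwards.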

What “Yes” Looks Like

If your team can answer these seven questions crisply, with one diagram of the pre-execution authority gate and examples of client-owned decision artifacts, you’re in real AI governance territory.

If they can’t, you don’t have an AI problem.

You have an action governance gap – and that’s where the real legal and fiduciary risk lives.

If you can’t point to a pre-execution authority gate and show who owns the artifacts it emits, you don’t have AI governance – you just have AI hope.