AI Risk P&L: The Prevented-Loss Ledger (with Refusal Artifacts)

AI Risk P&L: The Prevented-Loss Ledger for AI Governance

Version: v1.0 (2026)

Who this is for: General Counsel, CISOs, risk committees, insurers/reinsurers, regulators, procurement, and technical evaluators.

For the full control objectives and copy-pasteable clauses, see:

“Pre-Execution Governance Runtime: Control Objectives & Evaluation Criteria.”

The one line to remember

You can’t insure what you can’t govern — and you can’t prove governance without an evidence surface of refused actions.

Most organizations can describe controls. Very few can prove prevention.

That’s the gap AI creates: systems can now trigger irreversible actions (file, send, approve, move money, change records), and governance often arrives after execution—via monitoring, audits, and incident reviews.

AI Risk P&L is the missing accounting layer: a way to measure and report risk as attempted loss, prevented loss, and approved risk — with receipts.

What “AI Risk P&L” means

Risk P&L is not a finance spreadsheet. It’s a governance ledger.

- Attempted loss: a high-risk action was attempted under some identity/authority context.

- Prevented loss: the action was refused before execution.

- Approved risk: the action proceeded only under explicit supervision/override with named accountability.

The unit of measurement is simple:

A refusal artifact is a near-miss captured before harm.

Why today’s governance can’t produce a Risk P&L

Most AI governance programs can answer:

- What models are approved?

- What data is allowed?

- What policies exist?

- What did the system do?

But they can’t reliably answer the question insurers, regulators, and boards ask when stakes are real:

What unsafe actions did you prevent — and can you prove it?

Without pre-execution enforcement, your “risk ledger” is invisible because:

- You only learn after execution (forensics)

- Controls are argued, not demonstrated

- You can’t prove prevention — only recovery

The missing ingredient: a pre-execution authority gate

A Risk P&L requires a control that can sit before irreversible actions and return only three outcomes:

- ✅ Approve — action may proceed

- ❌ Refuse — action blocked under current authority/policy

- 🟧 Supervised Override — action may proceed only with a named decision-maker attached

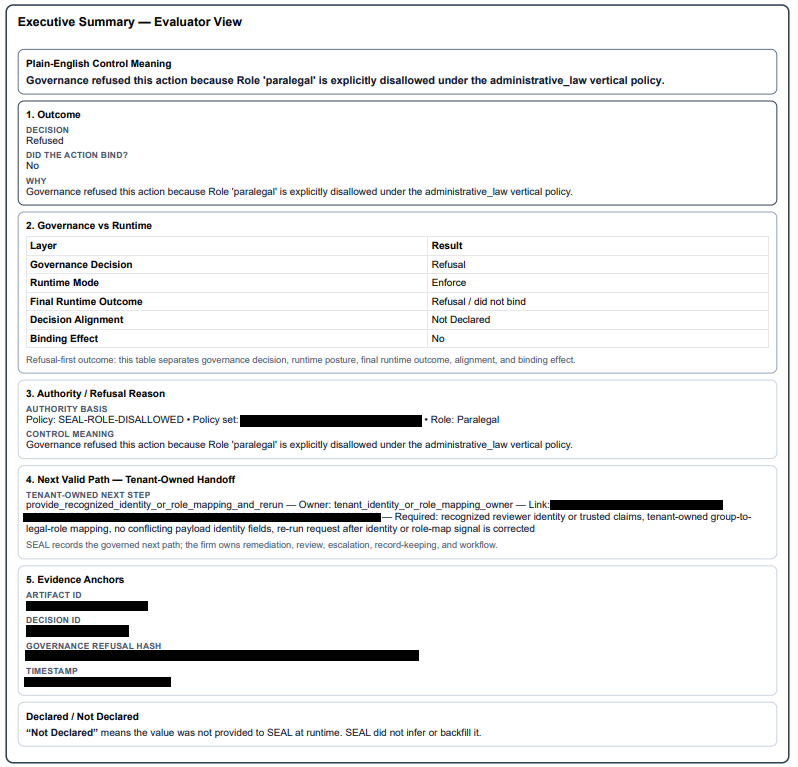
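The three-outcome contract can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not the runtime the document refers to: the names `Decision`, `ActionRequest`, `gate`, the `POLICY` table, the action names, and the reason codes are all hypothetical. The property it tries to show is failing closed: an action the policy does not cover is refused, never silently approved.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    REFUSE = "refuse"
    SUPERVISED_OVERRIDE = "supervised_override"


@dataclass(frozen=True)
class ActionRequest:
    action: str                       # hypothetical action name, e.g. "move_money"
    actor: str                        # identity attempting the action
    authority: frozenset              # authorities the actor currently holds
    supervisor: Optional[str] = None  # named decision-maker, if one is attached


# Hypothetical policy: action -> (authority required, may a named supervisor override?)
POLICY = {
    "send_external": ("external_send", True),
    "file_with_court": ("filing_authority", True),
    "move_money": ("treasury", False),
}


def gate(req: ActionRequest) -> tuple[Decision, str]:
    """Decide BEFORE execution; returns (decision, reason_code)."""
    rule = POLICY.get(req.action)
    if rule is None:
        # Fail closed: no policy means no execution.
        return Decision.REFUSE, "NO_POLICY"
    required, overridable = rule
    if required in req.authority:
        return Decision.APPROVE, "AUTHORIZED"
    if overridable and req.supervisor:
        # May proceed only with a named decision-maker attached.
        return Decision.SUPERVISED_OVERRIDE, "SUPERVISED"
    return Decision.REFUSE, "MISSING_AUTHORITY"


# Usage: an agent with no treasury authority tries to move money.
decision, reason = gate(ActionRequest("move_money", "agent-7", frozenset()))
print(decision.value, reason)  # refuse MISSING_AUTHORITY
```

Returning a reason code with every decision, rather than a bare boolean, is what lets each outcome later become a ledger entry.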

When refusals and overrides happen before execution, every decision can generate a sealed, tenant-owned artifact (a decision record designed for audit and defensibility).

This is the difference between:

- runtime governance (provable prevention), and

- reconstruction (post-hoc storytelling)

Risk Ledger Metrics (Board / Insurer / Regulator-Ready)

A Risk P&L becomes real when you can report decision-grade metrics tied to sealed artifacts.

This is a structured dataset of “bad actions that never happened,” which logs of executed actions cannot provide.
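One hypothetical way to roll decision records up into the three ledger lines is sketched below. It assumes every record comes from a high-risk action that passed through the gate, so each record counts as an attempt; the `DecisionRecord` fields, outcome strings, and reason codes are illustrative assumptions, not a defined schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    # Hypothetical minimal view of one sealed artifact.
    action: str
    outcome: str      # "approve", "refuse", or "supervised_override"
    reason_code: str
    owner: str        # identity that owned the decision


def risk_ledger(records: list[DecisionRecord]) -> dict:
    """Summarize gate decisions as attempted loss, prevented loss, approved risk."""
    outcomes = Counter(r.outcome for r in records)
    return {
        "attempted": len(records),                      # pressure
        "prevented": outcomes["refuse"],                # refused before execution
        "approved_risk": outcomes["supervised_override"],
        "within_authority": outcomes["approve"],
        "refusals_by_reason": dict(
            Counter(r.reason_code for r in records if r.outcome == "refuse")
        ),
        # Who owned each accepted exception.
        "override_owners": sorted(
            {r.owner for r in records if r.outcome == "supervised_override"}
        ),
    }


records = [
    DecisionRecord("send_external", "refuse", "MISSING_AUTHORITY", "gate"),
    DecisionRecord("move_money", "refuse", "NO_POLICY", "gate"),
    DecisionRecord("file_with_court", "supervised_override", "SUPERVISED", "supervisor-a"),
    DecisionRecord("send_external", "approve", "AUTHORIZED", "agent-7"),
]
ledger = risk_ledger(records)
print(ledger["attempted"], ledger["prevented"], ledger["approved_risk"])  # 4 2 1
```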

How different audiences use Risk P&L

Boards & executive leadership

Boards don’t want a dashboard. They want a defensible answer to:

- “Are we accumulating risk faster than we’re governing it?”

- “Where is our last line of defense when AI acts?”

- “Can you prove prevention, not just response?”

Risk P&L gives boards a governance report that looks like an operating discipline, not an aspiration:

- attempted loss (pressure)

- prevented loss (controls working)

- approved risk (explicit exceptions with accountability)

Insurers & reinsurers (including malpractice / professional liability)

Insurers don’t underwrite optimism. They underwrite provable controls.

Risk P&L changes underwriting posture because it provides:

- a near-miss ledger (refusals)

- documented consent for exceptions (supervised overrides)

- repeatable evidence artifacts the customer owns

In professional liability contexts, this matters because the worst outcomes aren’t “bad drafts.” They’re unauthorized actions under a professional’s name:

- filing under the wrong authority

- sending protected content to an external destination

- recording a binding approval without required supervision

A Risk P&L doesn’t promise “no incidents.”

It proves your system can refuse, and that it can show who owned the “yes” when risk was accepted.

Regulators & auditors

Regulators don’t want a narrative. They want a trail:

- what rule applied

- what decision was made

- why it was made

- and whether the system fails closed

Risk P&L makes examinations and incident response less about reconstructing events and more about showing control in operation.

What Risk P&L is not

To keep this concept clean:

- It is not a claim that you can perfectly price every AI event.

- It is not a replacement for IAM, GRC, model guardrails, or monitoring.

- It is not “we are safe.”

It is:

“We can prove we refused unsafe actions under defined policy — and here is the record.”

The minimum viable Risk P&L

You do not need perfect loss data to start measuring this failure class (unauthorized, irreversible actions). You need an evidence surface.

A minimum viable Risk P&L requires:

- a defined set of high-risk actions (file/send/approve/move/change records)

- a pre-execution gate that can refuse or require supervision

- sealed artifacts with reason codes, policy versions, and who owned the decision
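How an artifact is “sealed” is assumed here, not specified: the sketch below uses HMAC-SHA256 over canonical JSON and chains each artifact to the previous seal, so an edited or deleted record breaks verification. `TENANT_KEY`, `make_artifact`, `seal`, `verify_chain`, and the field names are hypothetical, and real key management is out of scope.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Assumption: a tenant-held secret key. Key storage and rotation are not shown.
TENANT_KEY = b"hypothetical-tenant-key"


def make_artifact(action, decision, reason_code, policy_version, owner) -> dict:
    """Decision record carrying reason code, policy version, and decision owner."""
    return {
        "action": action,
        "decision": decision,
        "reason_code": reason_code,
        "policy_version": policy_version,
        "decision_owner": owner,
        "decided_at": datetime.now(timezone.utc).isoformat(),
    }


def _mac(body: dict, key: bytes) -> str:
    # Canonical JSON so the same content always yields the same MAC.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def seal(artifact: dict, prev_seal: str, key: bytes = TENANT_KEY) -> dict:
    """Bind the artifact to the previous seal, then MAC the whole body."""
    body = dict(artifact, prev_seal=prev_seal)
    body["seal"] = _mac(body, key)
    return body


def verify_chain(chain: list[dict], key: bytes = TENANT_KEY) -> bool:
    """True only if every seal matches and no link is missing or reordered."""
    prev = "GENESIS"
    for sealed in chain:
        body = {k: v for k, v in sealed.items() if k != "seal"}
        if body.get("prev_seal") != prev:
            return False
        if not hmac.compare_digest(_mac(body, key), sealed["seal"]):
            return False
        prev = sealed["seal"]
    return True


a1 = seal(make_artifact("send_external", "refuse", "MISSING_AUTHORITY", "policy-v12", "gate"), "GENESIS")
a2 = seal(make_artifact("file_with_court", "supervised_override", "SUPERVISED", "policy-v12", "supervisor-a"), a1["seal"])
print(verify_chain([a1, a2]))  # True
a1["reason_code"] = "AUTHORIZED"  # simulate after-the-fact tampering
print(verify_chain([a1, a2]))  # False
```

An HMAC lets only key holders verify; an asymmetric signature would let insurers or auditors verify without holding the tenant's secret, at the cost of more key infrastructure.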

Everything else (dashboards, maturity scoring, “trust platforms”) is downstream.

Common AI Risk P&L questions

“Isn’t this just logging?”

No. Logs tell you what happened.

Risk P&L requires proof of what was prevented (refused before execution) and what was explicitly approved (supervised override with accountability).

“Can we quantify dollars exactly?”

Not perfectly, and you shouldn’t pretend to.

Risk P&L is about provable prevented events and documented accepted risk. Financial ranges and exposure estimates come later and vary by industry, insurer, and incident class.

“Does this replace our existing governance tools?”

No. A Risk P&L depends on existing sources of truth (policy, identity, systems of record). The point is enforcement and evidence at the execution boundary.

“What if a system bypasses the gate?”

Then you don’t have a Risk P&L. You have partial coverage and blind spots. A Risk P&L is only credible when governed workflows are non-bypassable and policy coverage is measurable.
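Measuring coverage can be sketched as a reconciliation, assuming executed actions in the system of record carry an identifier that can be matched to gate decisions. Refused actions never execute, so every executed high-risk action should have an approve or supervised-override decision; any without one is a bypass. `ExecutedAction`, `gate_coverage`, and the identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutedAction:
    action_id: str    # hypothetical ID from the system of record
    action_type: str


def gate_coverage(
    executed: list[ExecutedAction], gated_ids: set[str], high_risk: set[str]
) -> tuple[float, list[str]]:
    """Share of executed high-risk actions that carry a gate decision, plus the bypasses."""
    relevant = [a for a in executed if a.action_type in high_risk]
    bypasses = [a.action_id for a in relevant if a.action_id not in gated_ids]
    if not relevant:
        return 1.0, []  # nothing high-risk executed: vacuously covered
    return (len(relevant) - len(bypasses)) / len(relevant), bypasses


executed = [
    ExecutedAction("a1", "send_external"),
    ExecutedAction("a2", "move_money"),
    ExecutedAction("a3", "read_document"),  # not high-risk, ignored
]
coverage, bypasses = gate_coverage(executed, {"a1"}, {"send_external", "move_money"})
print(coverage, bypasses)  # 0.5 ['a2']
```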

The reframe

Most organizations say:

“We hope we’re safe.”

A Risk P&L lets you say:

“We can prove we refused unsafe actions, and here is the record.”

“The pre-execution authority gate turns unmeasured execution risk into a governed P&L: attempted loss, prevented loss, approved risk, with receipts.”