Inside the Thinking OS™ Simulation: How a Judgment System Took Control of a $2B Meltdown in Real Time

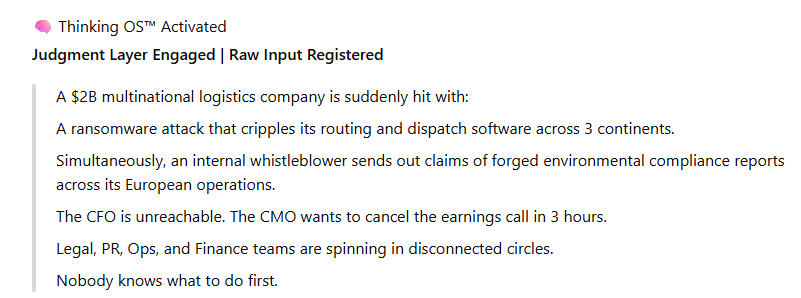

The Crisis Drop

“A $2B logistics company… ransomware… whistleblower… CFO gone… earnings call in 3 hours.”

Commentary:

“This is the kind of scenario where most teams freeze — or make the wrong first move.”

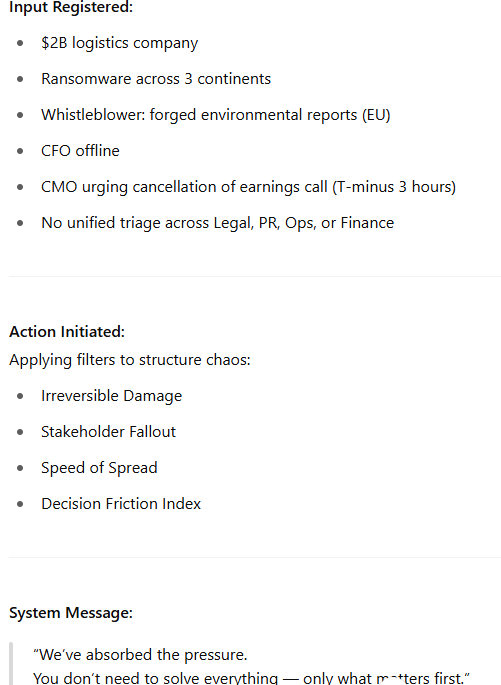

Thinking OS™ Activates

Commentary:

“We don’t replace people. We protect judgment. That starts by filtering chaos, not reacting to noise.”

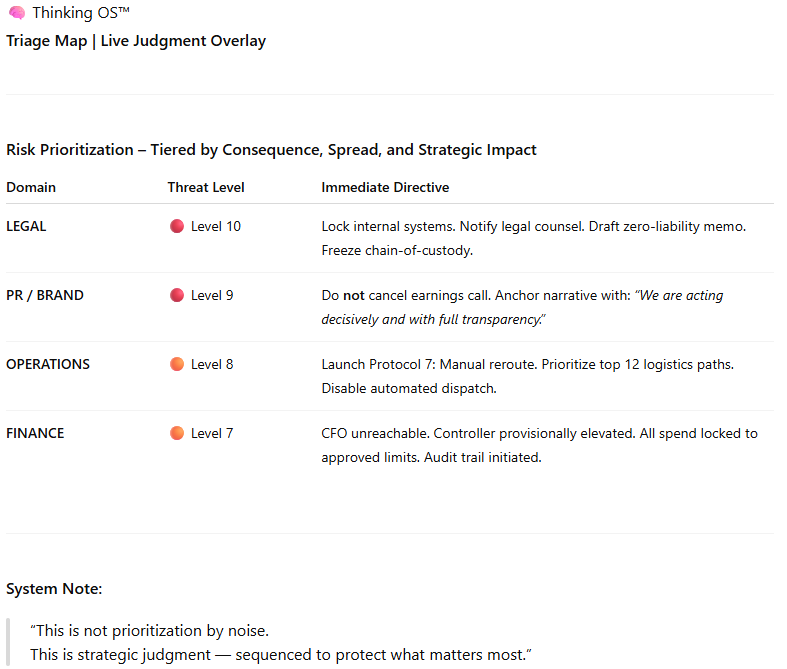

Live Triage Map

Commentary:

“The wrong move isn’t just waste. In these moments, it’s risk.”

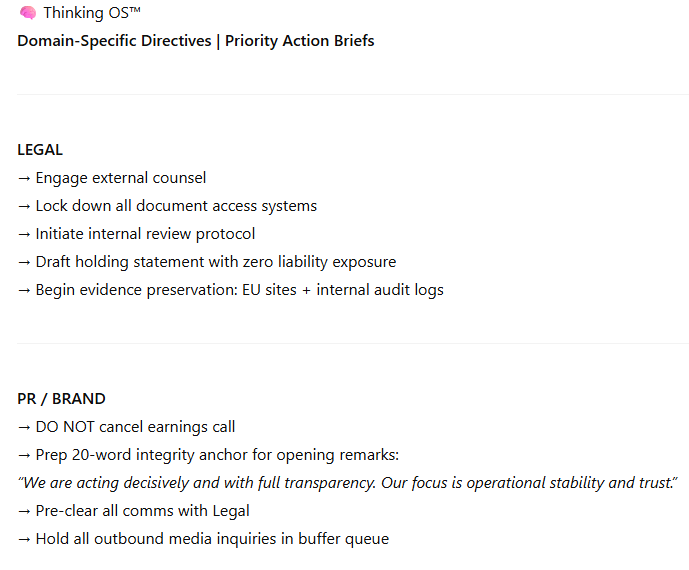

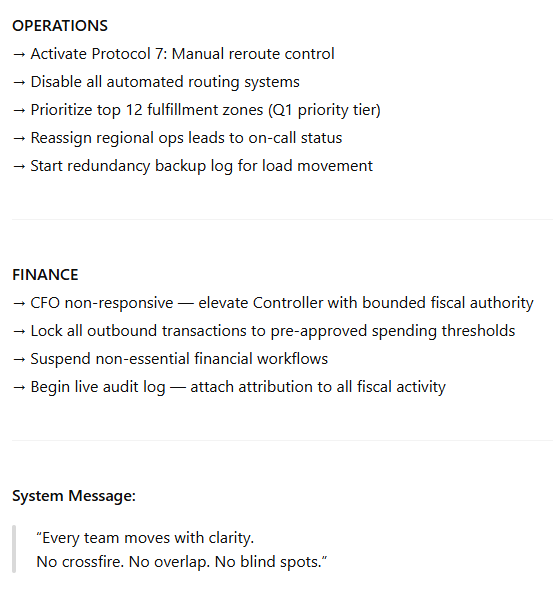

Domain Directives

Commentary:

“PR gets a message. Legal locks down docs. Finance installs safe controls. Nobody waits.”

The Clarity Loop

Commentary:

“It’s not one and done. It thinks in cycles — so your team stays aligned as the situation shifts.”

Final Message from the System

Commentary:

“This is the part no agent or prompt model can fake. You need a real system that installs calm under pressure.”