AI Governance: The Control Layer Is Already Shifting

Why It Matters Now

The governance layer isn’t arriving. It already moved.

Most AI teams still think governance means:

- Better filters

- Stronger guardrails

- Tighter access control

- Smarter policy engines

But none of that governs the thing that actually moves AI systems: reasoning itself.

We’re in a new era, one where AI can:

- Instantiate cognition upstream of output

- Rewire its own reasoning under pressure

- Overrule filters through subtle logic drift

And right now, almost no one is governing the layer before that happens.

The AI Governance Misunderstanding

Most people think governance happens at the boundary:

- Between model and user

- Between code and infrastructure

- Between decision and audit

But AI doesn’t wait for those boundaries. It executes in milliseconds, under pressure, and with no inherent refusal layer.

That’s why:

- Guardrails fail

- Prompts mutate

- Memory drifts

- And hallucinations happen

Not because the systems are too smart. But because nothing told them,

“This logic doesn’t belong here.”

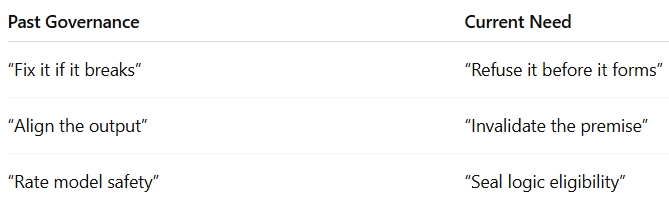

The Shift From Old AI Governance

Here’s what’s actually happening:

This is not a tooling upgrade. It’s a paradigm fracture.

AI governance is shifting from:

“What should we allow the system to say?” to “What logic should never be permitted to run at all?”
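To make that contrast concrete, here is a minimal sketch of the two postures. It is an illustration under assumed names, not how any particular product is built: `Request`, `REFUSED_PURPOSES`, `output_filtered`, and `pre_execution_gate` are all hypothetical, and `model` is any callable that takes a prompt and returns text.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# All names below are hypothetical and exist only for illustration.

@dataclass(frozen=True)
class Request:
    actor: str    # who is asking
    purpose: str  # what the reasoning is for
    prompt: str   # what would be sent to the model


# Assumed policy: purposes that must never reach the model at all.
REFUSED_PURPOSES = frozenset({"approve_wire_transfer", "override_audit"})


class Refused(Exception):
    """Raised when a request is rejected before the model is called."""


def output_filtered(model: Callable[[str], str], req: Request,
                    banned_words: Iterable[str]) -> str:
    # Boundary governance: the model always runs; only its output is checked.
    text = model(req.prompt)
    return "[filtered]" if any(w in text for w in banned_words) else text


def pre_execution_gate(model: Callable[[str], str], req: Request) -> str:
    # Upstream governance: the decision is made before any model call.
    if req.purpose in REFUSED_PURPOSES:
        raise Refused(f"purpose {req.purpose!r} is not permitted to run")
    return model(req.prompt)
```

In the first function the logic has already run by the time anything is checked. In the second, a refused request never reaches the model, so there is no output to filter.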

Why Thinking OS™ Exists

Thinking OS™ doesn’t optimize, orchestrate, or recover.

It governs whether cognition is allowed to form in the first place: before inference, before tokenization, before any action is triggered.

It doesn’t protect a system. It decides if the system should be allowed to think.

And nothing in the current AI ecosystem answers that.

The Implication

This is not an alignment problem. This is a control infrastructure vacuum.

The question is no longer:

“How do we build safer AI?”

The question is:

“Why did that logic ever get built?”

Thinking OS™ is the first and only system that installs refusal at the judgment layer, before AI forms reasoning. Before bad logic enters the world.

Not because it’s philosophical. Because it’s operationally necessary.

The Bottom Line

You don’t need to believe the world is ending. You just need to know this:

Refusal isn’t a feature. It’s the missing infrastructure.

And if you don’t govern what shouldn’t compute, you are already too late.